Use Case

The project is split into three use cases:

- Use case 1: The base concept is to create an environment in which the MBOTs can move a certain distance in a certain direction. The movement can be specified either as a distance (for example, 1 metre forward) or as a time (for example, driving for 5 seconds in a given direction). A Website will be created to start the MBOTs at the press of a button. Its capabilities will be expanded in later use cases. The Website should act as a kind of command centre for the MBOTs. A REST service is used to control the MBOTs directly. This service will be written in Java and will take the input from the Website/REST call to control the MBOTs. Two cars will be used in all of the use cases, although the scenario could be expanded to more vehicles if necessary.

- Use case 2: At this point the MBOTs can be controlled through the Website, which means that they move a predefined distance, and movement commands are sent using the REST service. The goal of use case 2 is to introduce collision detection. Each MBOT should be able to detect another MBOT that is on a collision course with it and initiate an evasive manoeuvre to prevent the collision. This will be done using a motion sensor placed on the front of one of the MBOTs. When the sensor detects movement (for example, a drastic change in the ultrasonic reading), the MBOT starts an evasive manoeuvre, either by stopping, by turning and moving, or by driving a curve (as programmed in use case 1). The bot can then either continue driving (for example, if the driving time was set to 10 seconds) or stop (after driving a safe distance to avoid the collision with the other MBOT). Both MBOTs will drive on the same “road” and are on a direct collision course. It is also possible to place one MBOT on one side and let the other drive directly towards it.

- Use case 3: Now that collisions between MBOTs can be avoided, the functionality can be expanded. Use case 3 therefore adds sound sensors to both cars. With these, the cars can react to sound cues made by a person (e.g. a clap) or, if possible, to the sound that another MBOT produces, and use it to avoid a collision (assuming the bots are moving in a line, as otherwise it would be very hard to predict where the other MBOT is). The sound sensors also make more complex movements possible, because the MBOTs no longer have to face each other. If a bot recognises a sound, it stops immediately and emits a sound of its own to signal other MBOTs nearby to stop as well.

Experiment

Use Case 1:

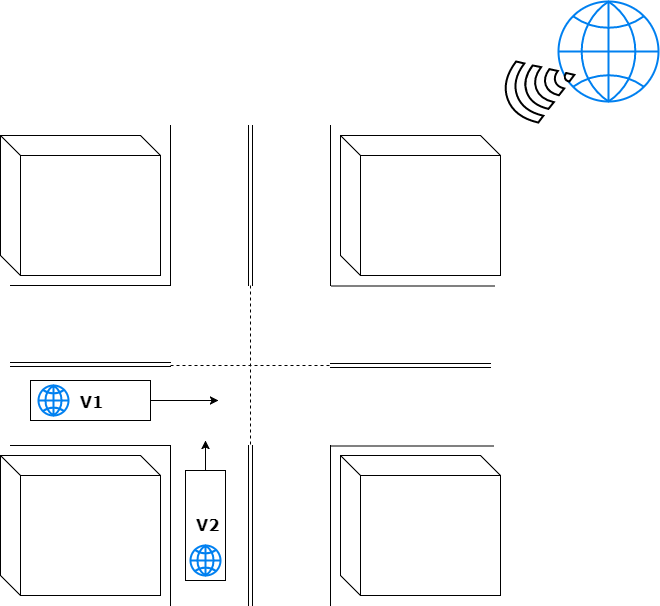

The base concept of this use case is to create an environment in which the MBOTs can move a certain distance in a certain direction. The movement can be specified either as a distance (for example, 1 metre forward) or as a time (for example, driving for 5 seconds in a given direction).

A Website will be created to start the MBOTs at the press of a button. Its capabilities will be expanded in later use cases. The Website should act as a kind of command centre for the MBOTs.

A REST service is used to control the MBOTs directly. This service will be written in Java and will take the input from the Website/REST call to control the MBOTs. Two cars will be used in all of the use cases, although the scenario could be expanded to more vehicles if necessary.
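As a minimal sketch of how the service side could turn a request into a drive time, the class below parses a query string that carries either a distance or a time. The parameter names (`direction`, `distance`, `seconds`), the class name `MoveCommand` and the cruising speed are assumptions made for illustration; they are not taken from the project code, and the speed would have to be measured on the real MBOTs.

```java
import java.util.HashMap;
import java.util.Map;

class MoveCommand {
    // Assumed cruising speed in metres per second (hypothetical value).
    static final double ASSUMED_SPEED_MPS = 0.2;

    final String direction;
    final long durationMillis;

    MoveCommand(String direction, long durationMillis) {
        this.direction = direction;
        this.durationMillis = durationMillis;
    }

    // Parses a query such as "direction=forward&distance=1.0"
    // or "direction=left&seconds=5" into a direction and a drive time.
    static MoveCommand fromQuery(String query) {
        Map<String, String> params = new HashMap<>();
        for (String pair : query.split("&")) {
            String[] kv = pair.split("=", 2);
            if (kv.length == 2) {
                params.put(kv[0], kv[1]);
            }
        }
        String direction = params.getOrDefault("direction", "forward");
        long millis;
        if (params.containsKey("seconds")) {
            // Time-based movement: use the requested duration directly.
            millis = Math.round(Double.parseDouble(params.get("seconds")) * 1000);
        } else if (params.containsKey("distance")) {
            // Distance-based movement: convert metres to a drive time.
            double metres = Double.parseDouble(params.get("distance"));
            millis = Math.round(metres / ASSUMED_SPEED_MPS * 1000);
        } else {
            throw new IllegalArgumentException("either 'seconds' or 'distance' is required");
        }
        return new MoveCommand(direction, millis);
    }
}
```

With the assumed speed of 0.2 m/s, `MoveCommand.fromQuery("direction=forward&distance=1.0")` yields a drive time of 5000 ms, the same as a request for 5 seconds.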

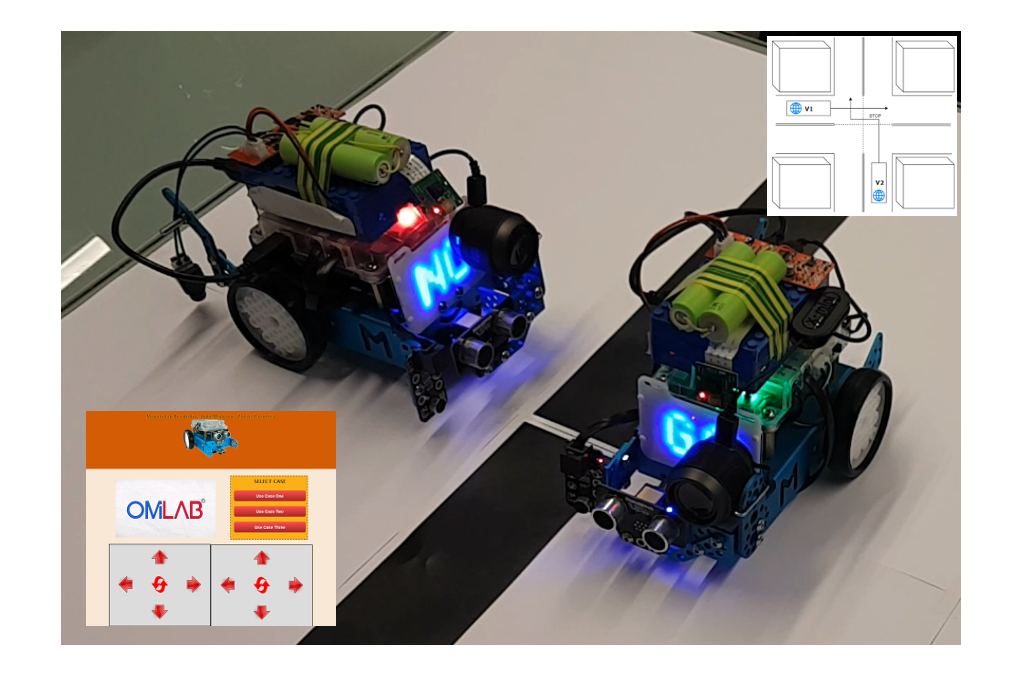

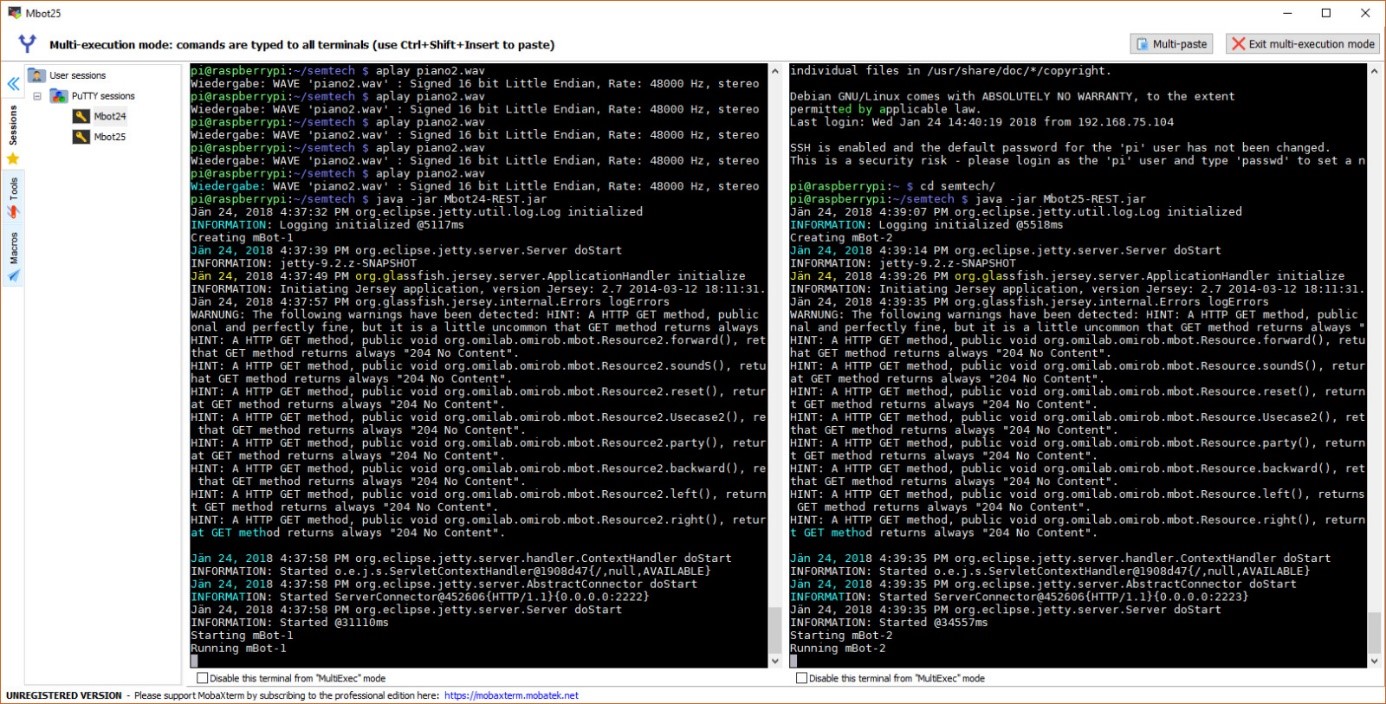

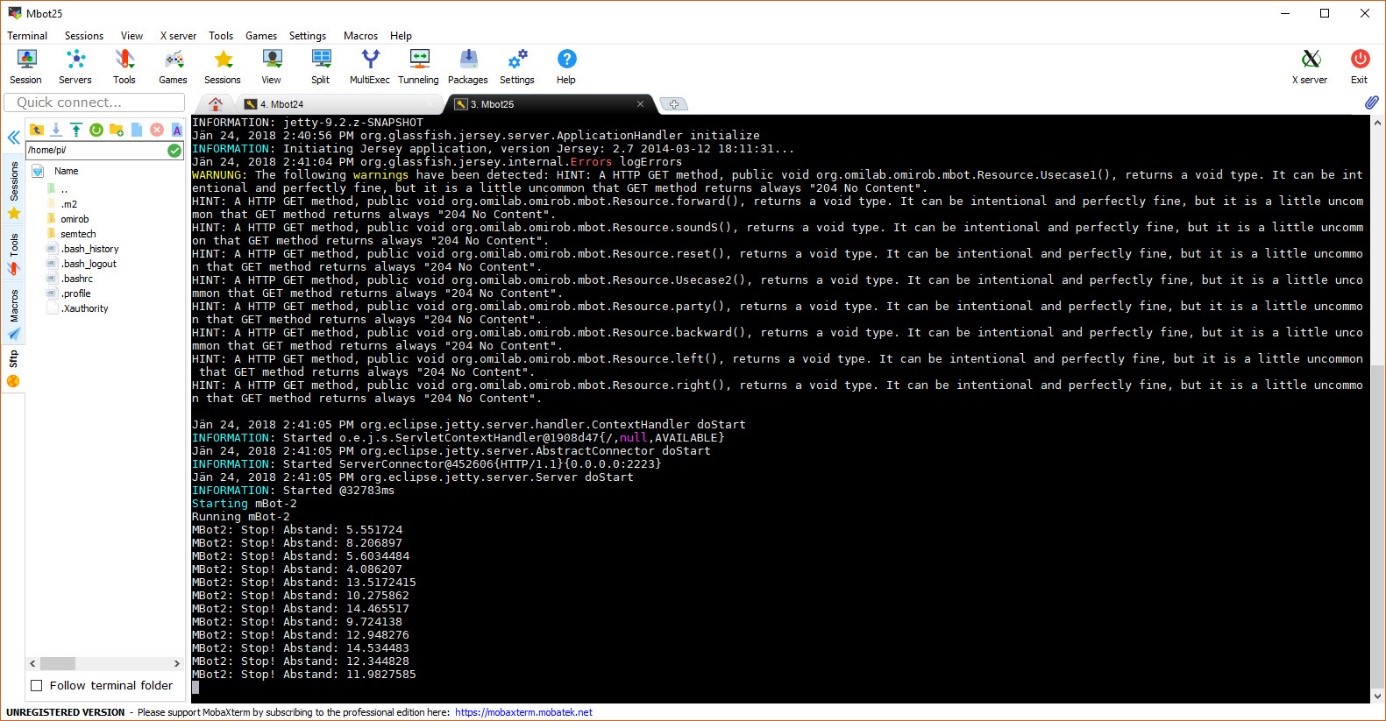

The picture above shows that both MBOTs are working and that the jar files on the Raspberry Pis are running, meaning that their local servers are on standby until called. This allows us to call the appropriate REST service for each MBOT individually. A description of how the code works and how to work with the MBOTs can be found in the comments in the code itself.

Use Case 2:

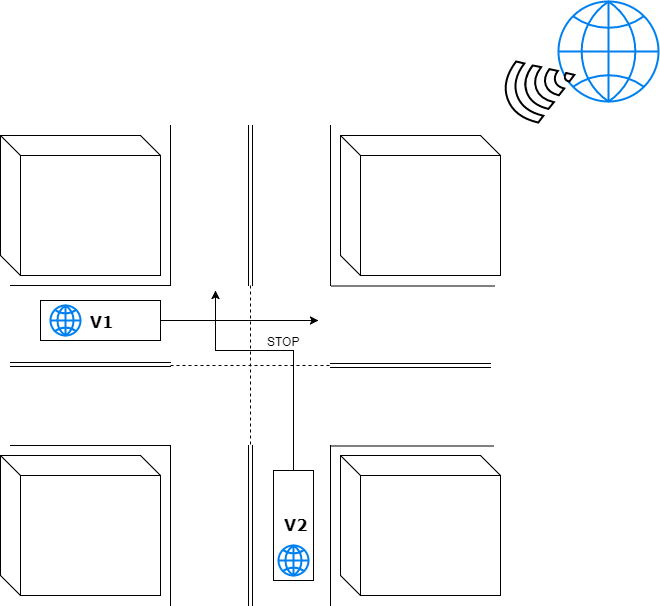

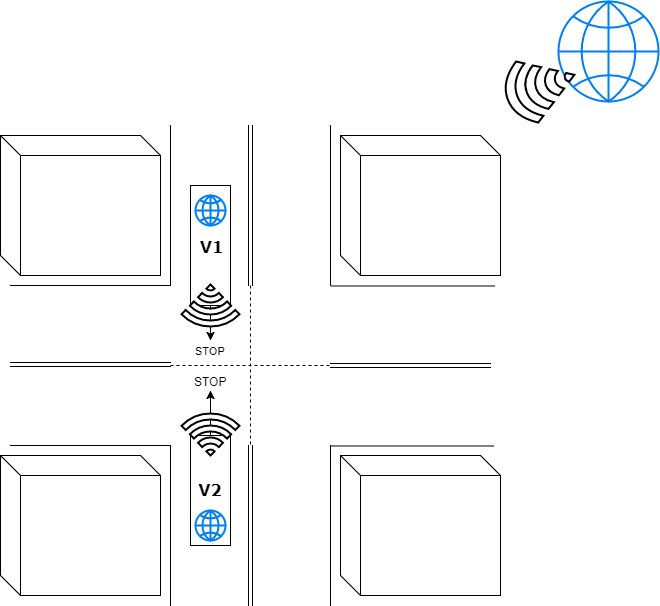

At this point the MBOTs can be controlled through the Website, which means that they move a predefined distance, and movement commands are sent using the REST service. The goal of use case 2 is to introduce collision detection. Each MBOT should be able to detect another MBOT that is on a collision course with it and initiate an evasive manoeuvre to prevent the collision.

This will be done using a motion sensor placed on the front of one of the MBOTs. When the sensor detects movement (for example, a drastic change in the ultrasonic reading), the MBOT starts an evasive manoeuvre, either by stopping, by turning and moving, or by driving a curve (as programmed in use case 1). The bot can then either continue driving (for example, if the driving time was set to 10 seconds) or stop (after driving a safe distance to avoid the collision with the other MBOT).

Both MBOTs will drive on the same “road” and are on a direct collision course. It is also possible to place one MBOT on one side and let the other drive directly towards it.

The picture of the terminal above shows MBOT-2 using the ultrasonic sensor to detect MBOT-1 and stopping when the distance between them falls below a certain threshold. In the simulation we show this by letting both MBOTs start driving at the same time, with one driving slightly faster than the other. This way MBOT-2 detects an obstacle in its path and starts moving around it.
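The detection logic described for this use case can be sketched as a small decision class: an evasive manoeuvre is triggered when the measured distance falls below a threshold or when the reading drops drastically between two samples. The class name `CollisionGuard`, the centimetre units and the threshold values are assumptions for illustration only; the project's actual sensor code may work differently.

```java
class CollisionGuard {
    enum Action { CONTINUE, EVADE }

    private final double minDistanceCm; // evade when an obstacle is closer than this
    private final double maxDropCm;     // evade when the reading drops by more than this
    private double lastReadingCm = Double.NaN;

    CollisionGuard(double minDistanceCm, double maxDropCm) {
        this.minDistanceCm = minDistanceCm;
        this.maxDropCm = maxDropCm;
    }

    // Evaluates one ultrasonic reading and remembers it for the next call.
    Action onReading(double distanceCm) {
        boolean tooClose = distanceCm < minDistanceCm;
        boolean suddenDrop = !Double.isNaN(lastReadingCm)
                && (lastReadingCm - distanceCm) > maxDropCm;
        lastReadingCm = distanceCm;
        return (tooClose || suddenDrop) ? Action.EVADE : Action.CONTINUE;
    }
}
```

With `new CollisionGuard(15, 30)`, the readings 100 and 95 keep the bot driving, a jump down to 50 triggers `EVADE` because of the sudden drop, and any reading below 15 triggers `EVADE` regardless of the previous sample. What the bot does on `EVADE` (stop, turn, or drive a curve) is left to the caller.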

Use Case 3:

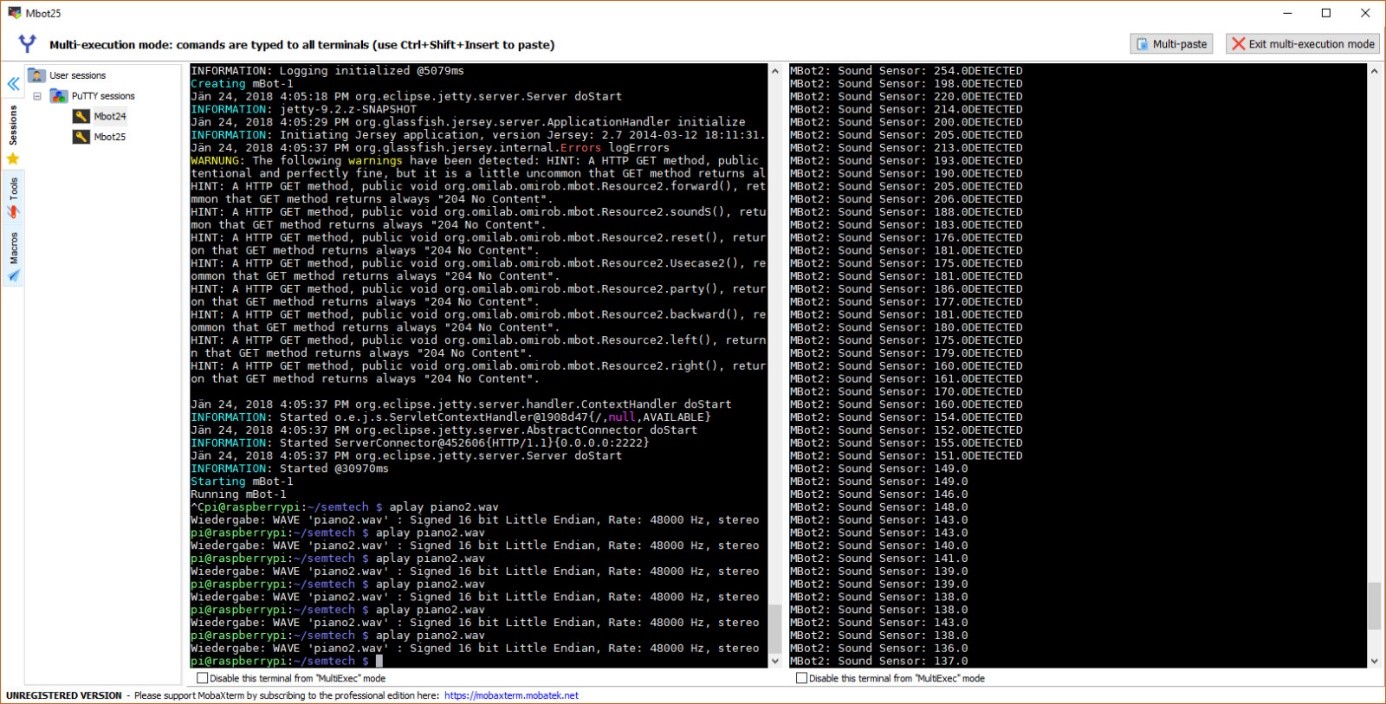

Now that collisions between MBOTs can be avoided, the functionality can be expanded. Use case 3 therefore adds sound sensors to both cars. With these, the cars can react to sound cues made by a person (e.g. a clap) or, if possible, to the sound that another MBOT produces, and use it to avoid a collision (assuming the bots are moving in a line, as otherwise it would be very hard to predict where the other MBOT is).

The sound sensors also make more complex movements possible, because the MBOTs no longer have to face each other. If a bot recognises a sound, it stops immediately and emits a sound of its own to signal other MBOTs nearby to stop as well.

The Website will also be extended with more functionality: the ability to turn sensors on and off (e.g. turning off the brightness sensors when they are not needed, to prevent unwanted behaviour) and the ability to play a sound through a loudspeaker on the MBOT.

Additionally, the rule engine will be enhanced with new rules to accommodate the changes and the additional sensors that have been added to the MBOTs. This enables the MBOTs to react to registered sounds with the right response (e.g. stopping and, as a bonus, sending a signal themselves).
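To illustrate the kind of rule meant here, the following sketch models one sound rule: when the sound level exceeds a threshold, the bot stops and emits a sound itself. Readings from sensors that have been switched off are ignored. All names (`SoundRules`, `Reading`, `Rule`, the action strings) and the threshold are hypothetical and do not reflect the project's actual rule engine.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.function.Predicate;

class SoundRules {
    static final class Reading {
        final String sensor;
        final double value;
        Reading(String sensor, double value) {
            this.sensor = sensor;
            this.value = value;
        }
    }

    static final class Rule {
        final String sensor;
        final Predicate<Double> condition;
        final List<String> actions;
        Rule(String sensor, Predicate<Double> condition, List<String> actions) {
            this.sensor = sensor;
            this.condition = condition;
            this.actions = actions;
        }
    }

    // One example rule: a loud sound makes the bot stop and emit a sound itself.
    static List<Rule> defaultRules(double soundThreshold) {
        List<Rule> rules = new ArrayList<>();
        rules.add(new Rule("sound", level -> level > soundThreshold,
                List.of("STOP", "EMIT_SOUND")));
        return rules;
    }

    // Returns the actions of all matching rules; disabled sensors produce no actions.
    static List<String> evaluate(List<Rule> rules, Reading reading, Set<String> enabledSensors) {
        List<String> actions = new ArrayList<>();
        if (!enabledSensors.contains(reading.sensor)) {
            return actions;
        }
        for (Rule rule : rules) {
            if (rule.sensor.equals(reading.sensor) && rule.condition.test(reading.value)) {
                actions.addAll(rule.actions);
            }
        }
        return actions;
    }
}
```

Keeping the set of enabled sensors as a separate argument mirrors the Website switch for turning sensors on and off: the rules stay unchanged, and a disabled sensor simply never triggers them.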

In the right-hand window we can see that the sound sensor of MBOT-2 detects the music that is being emitted from the speaker of MBOT-1. This data can serve as a basis for extensions of this project.

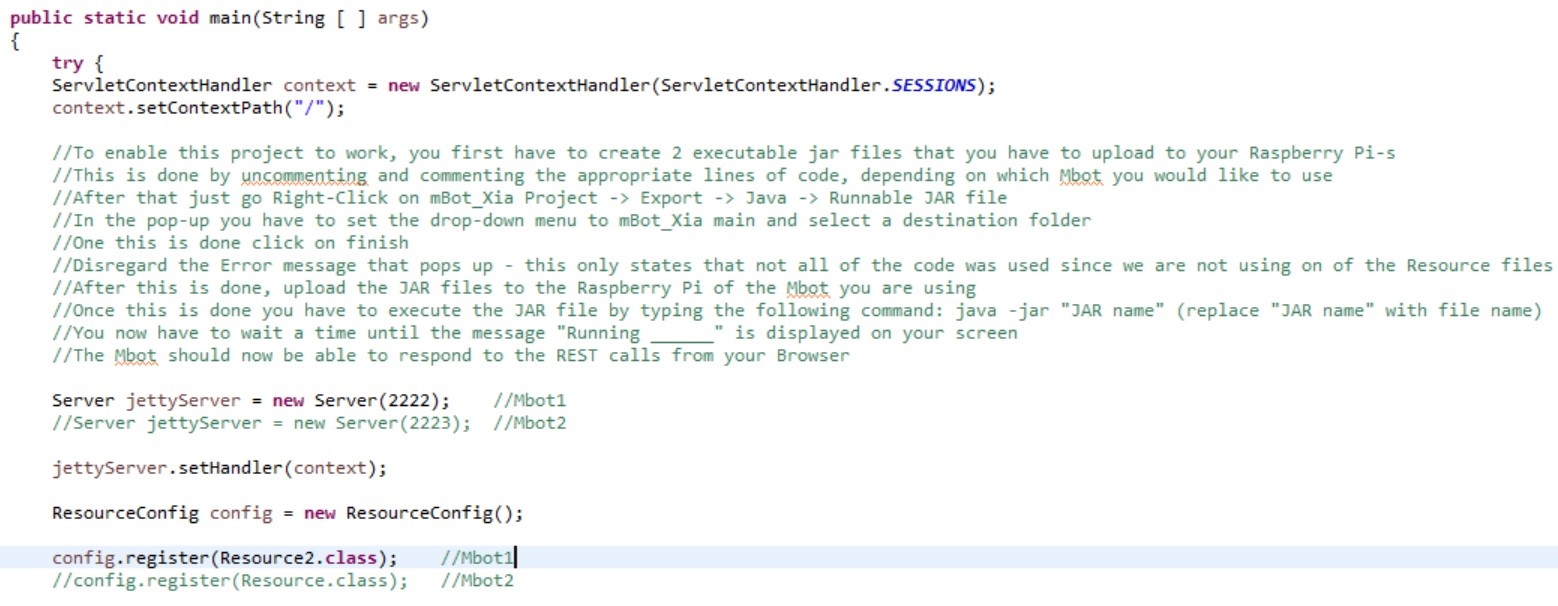

The picture above shows the steps needed to get the project running on the MBOTs. The same steps are also documented in the source code.

Results

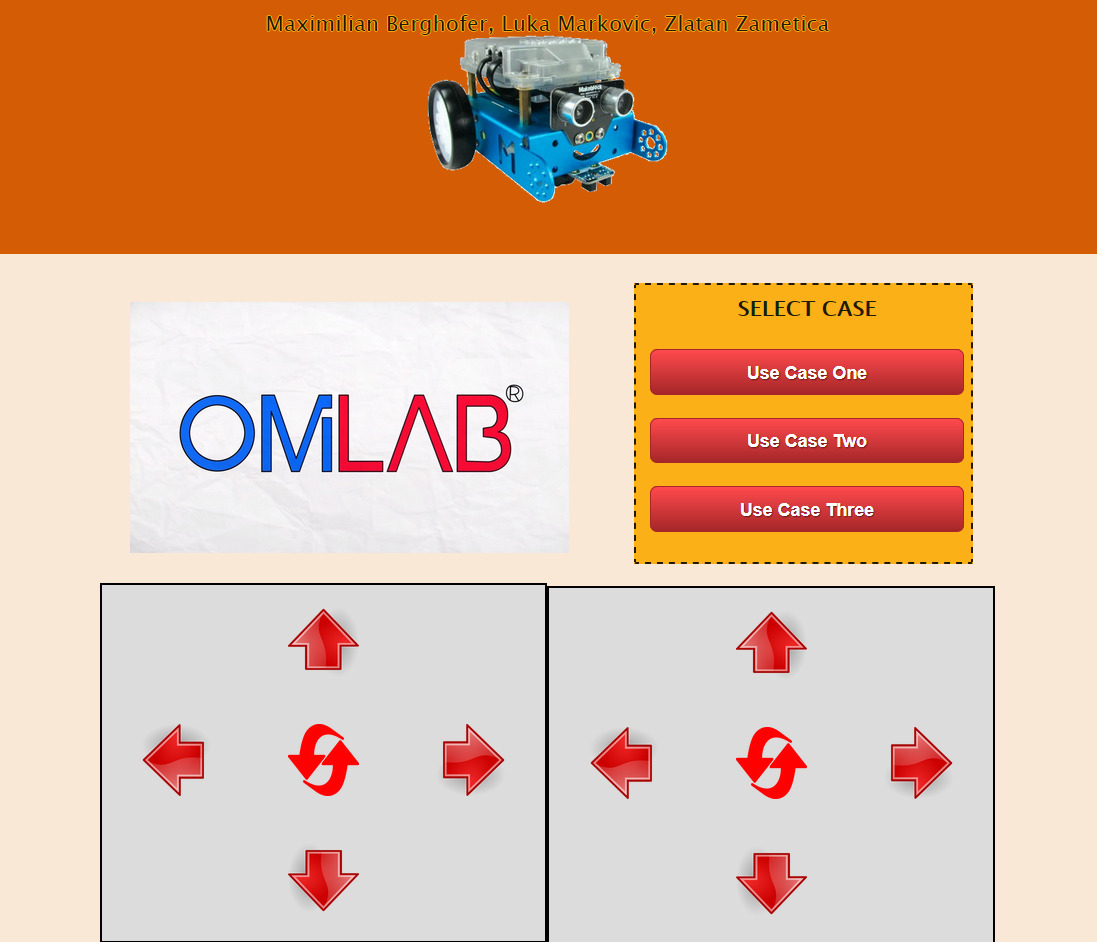

The following videos show the MBOTs in action as they move using the controls built into the website. These controls trigger REST calls, which are sent to the MBOTs. The bots can be controlled directly using the control elements or through the use case buttons, each of which triggers a use case scenario.
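For readers who want to trigger such a call without the website, the request can be sketched in Java with the JDK's built-in HTTP client classes. The IP addresses, the port, the `/move` path and the parameter names are hypothetical placeholders; the real values depend on how the REST service on each Raspberry Pi is configured.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpRequest;
import java.nio.charset.StandardCharsets;

class MbotRestClient {
    // Hypothetical base URLs, one per MBOT.
    static final String MBOT_1 = "http://192.168.0.11:8080";
    static final String MBOT_2 = "http://192.168.0.12:8080";

    // Builds (but does not send) a POST request asking one MBOT to drive
    // in the given direction for the given number of seconds.
    static HttpRequest moveRequest(String baseUrl, String direction, int seconds) {
        String query = "direction=" + URLEncoder.encode(direction, StandardCharsets.UTF_8)
                + "&seconds=" + seconds;
        URI uri = URI.create(baseUrl + "/move?" + query);
        return HttpRequest.newBuilder(uri)
                .POST(HttpRequest.BodyPublishers.noBody())
                .build();
    }
}
```

A request built this way could be sent with `HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.discarding())`. Because each MBOT has its own base URL, the two bots can be addressed individually.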

The OMILAB sign has a special feature called the “Party Mode”, which tests that both MBOTs are working correctly. It checks whether the motors run in sync, whether sounds can be produced and whether the RGB lights function correctly. To the right of the OMILAB sign are the start buttons for the three use cases. Beneath them are two grey boxes, which are used to move the MBOTs manually (the left box for MBOT-1 and the right box for MBOT-2). The sign in the middle of each box is the RESET button, which resets the MBOT if something unusual happens (for example, if the MBOT comes close to the edge of the table).

The following two videos show Use Case 1, where the bots move in response to the REST calls.

This video shows the collision prevention scenario where the paths of both MBOTs cross and one of them has to evade the other to avoid a collision.

Now we show the sound sensor of one MBOT registering the sounds that the other MBOT creates using its loudspeaker.

Clicking the OMiLAB logo starts the MBOTs' “party mode”, in which the motors are checked for proper functionality.