Use Case

The goal of this project is to support the interaction between a person and a cyber-physical system (CPS) with the help of the NAO.

The NAO should serve as a smart assistant that not only supports the interaction between a person and a CPS, but also helps users orient themselves within the cyber-physical system.

For instance, if the user does not know the capabilities of the current CPS, the NAO should provide the necessary information about them, as well as about every robot connected to the cyber-physical system.

The NAO should not only provide information about the capabilities of the environment, but also give warnings or error messages if something is not working properly. Based on this knowledge, the user can plan tasks that can be accomplished within the cyber-physical system and then execute them.

All in all, the NAO should act as a mediator between the user and the cyber-physical system.

Within the scope of this experiment, a specific use case was implemented, in which it is assumed that the user is working with a functional CPS.

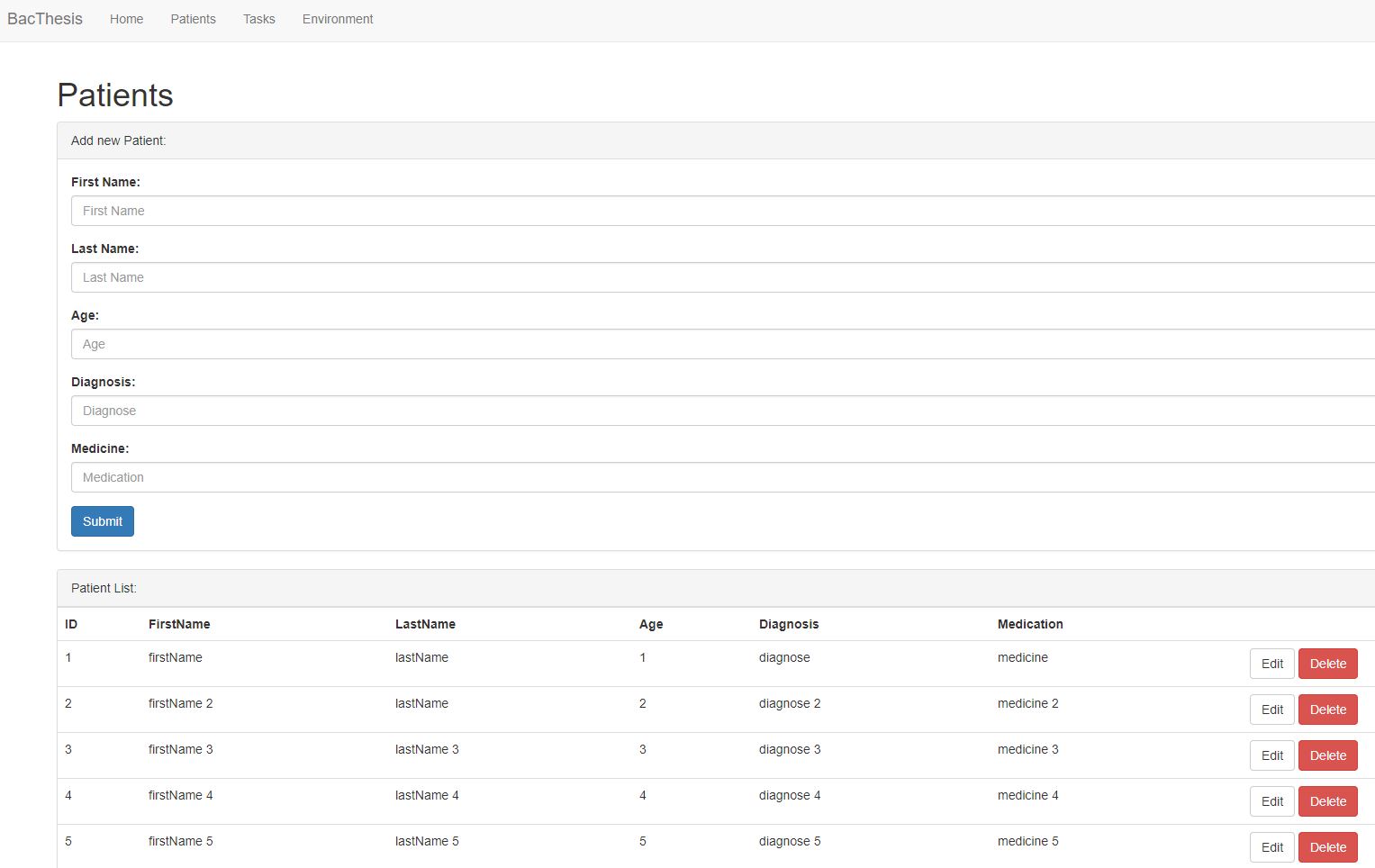

This use case handles the automatic distribution of medication in hospitals or retirement homes by self-driving robots. The user can add, update or delete data about patients, tasks and environments through a web interface. Furthermore, the NAO provides information about the current tasks and the robots available within the cyber-physical system, and announces when a task is started.

There are several ways to interact with the NAO:

- touch the front sensor of the head

- touch the rear sensor of the head

- touch the back of either the right or the left hand

- say one of the keywords “environment” or “task”

- start tasks through the web interface

Experiment

For this experiment, the University of Vienna provided one of its two NAOs.

Within the scope of this experiment, the NAO's speakers, the tactile sensors on its head and hands, and the microphones used by its voice recognition module were utilized. Access to the NAO's capabilities was simplified by the library offered by Softbank Robotics, which also provides documentation for each API.

The following APIs were used in this experiment:

- ALMemory

- ALTextToSpeech

- ALSpeechRecognition

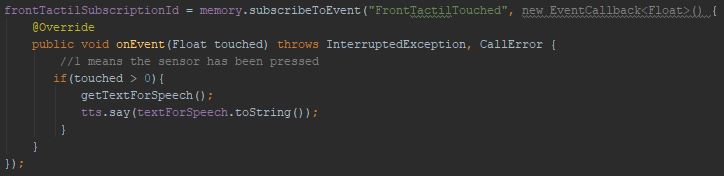

To make the NAO speak when one of the sensors is touched, the ALMemory service was used to subscribe to an event and override the “onEvent” function, as seen below:

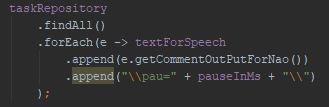

The code above is executed when the sensor on the front of the NAO's head is touched. The implementation for the other sensors is the same, except for the body of the “onEvent” method. To make the NAO speak, ALTextToSpeech was used (variable tts, method say). Unfortunately, the NAO speaks rather fast and without any pauses, so it is hard to understand when it reads out many tasks at once. Fortunately, special tags can be embedded in the text string, as seen below:

The corresponding code iterates over the text of each task and appends a special tag that makes the NAO pause for a certain amount of time before it continues speaking. In this case, the tag \\pau=…\\ was used.
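As a rough sketch of this idea (not the project's code, which is shown in the figures), the helper below joins the task texts into a single string and appends a pause tag after each one. The class name PauseTags, its method names and the 800 ms default are hypothetical; only the pause tag itself comes from the NAO text-to-speech tags, where the value is a duration in milliseconds.

```java
import java.util.List;
import java.util.StringJoiner;

// Hypothetical helper, not the project's code: builds one utterance for
// ALTextToSpeech.say() with a pause tag after every task text.
final class PauseTags {
    static final int DEFAULT_PAUSE_MS = 800; // assumed value

    // Builds a pause tag. In Java source the backslashes must be escaped,
    // which is why the tag appears as "\\pau=...\\" in code.
    static String pause(int millis) {
        if (millis < 0) {
            throw new IllegalArgumentException("pause must be non-negative");
        }
        return "\\pau=" + millis + "\\";
    }

    // Appends a pause tag after each task text and joins them with spaces.
    static String withPauses(List<String> taskTexts, int millis) {
        StringJoiner utterance = new StringJoiner(" ");
        for (String text : taskTexts) {
            utterance.add(text.trim() + " " + pause(millis));
        }
        return utterance.toString();
    }
}
```

On the robot, the resulting string would be passed to the say method of the ALTextToSpeech proxy (the tts variable mentioned above), for example tts.say(PauseTags.withPauses(taskTexts, PauseTags.DEFAULT_PAUSE_MS)).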

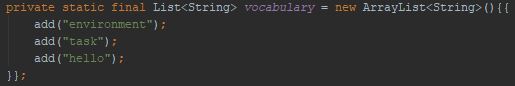

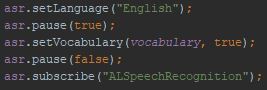

To use the voice recognition API of the NAO, a vocabulary has to be defined, as seen below:

Furthermore, the vocabulary has to be registered with the ALSpeechRecognition API by calling the method setVocabulary, as seen below:

The code snippet also sets the language. The NAO supports several languages, which can be installed.
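The following sketch only illustrates the configuration order. Because the NAOqi SDK cannot run without a robot or simulator, it uses a hypothetical Recognizer interface whose two methods mirror ALSpeechRecognition's setLanguage and setVocabulary(vocabulary, enableWordSpotting). Recognizer, SpeechSetup and the recording test double are assumptions for illustration, not part of the SDK.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in, NOT part of the NAOqi SDK: the method names mirror
// ALSpeechRecognition.setLanguage and setVocabulary so the order can be tested.
interface Recognizer {
    void setLanguage(String language);
    void setVocabulary(List<String> vocabulary, boolean enableWordSpotting);
}

final class SpeechSetup {
    // The two keywords of the use case.
    static final List<String> VOCABULARY = List.of("environment", "task");

    static void configure(Recognizer asr, String language) {
        // Set the language before loading the vocabulary (order assumed here).
        asr.setLanguage(language);
        // false = word spotting disabled: the whole utterance must match an entry.
        asr.setVocabulary(VOCABULARY, false);
    }
}

// Test double that records the calls in the order they were made.
final class RecordingRecognizer implements Recognizer {
    final List<String> calls = new ArrayList<>();

    @Override
    public void setLanguage(String language) {
        calls.add("setLanguage:" + language);
    }

    @Override
    public void setVocabulary(List<String> vocabulary, boolean enableWordSpotting) {
        calls.add("setVocabulary:" + vocabulary + ":" + enableWordSpotting);
    }
}
```

On the robot, the ALSpeechRecognition proxy would take the place of the Recognizer. Depending on the NAOqi version, the recognition engine may also have to be paused before the vocabulary is changed, so the ALSpeechRecognition documentation for the installed version is worth checking.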

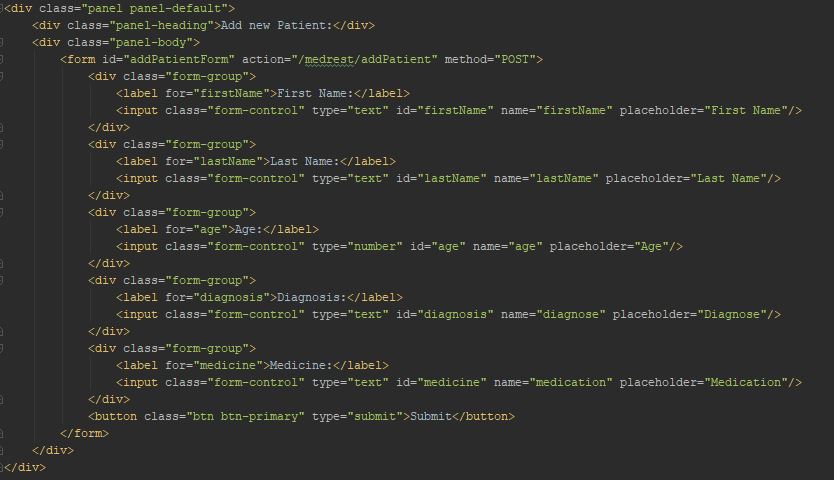

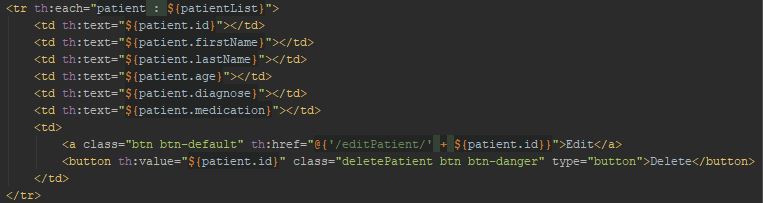

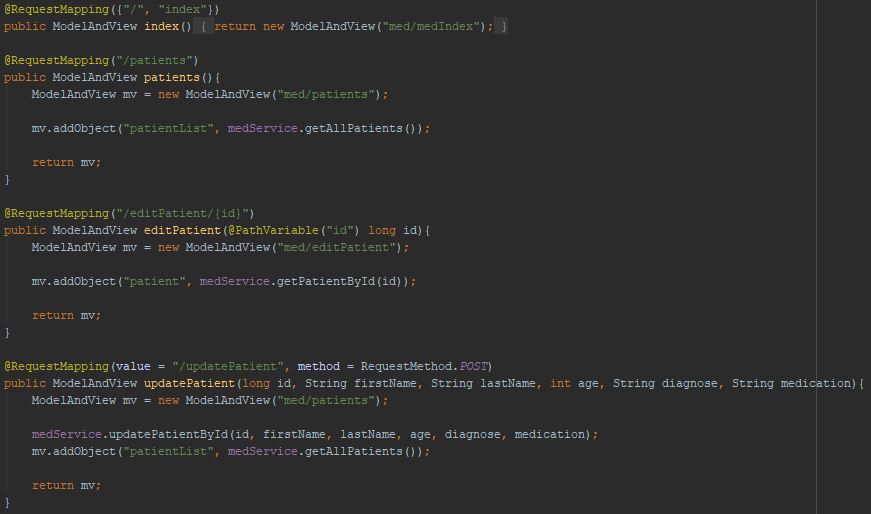

Bootstrap and Thymeleaf were used to implement the web pages. Bootstrap offers predefined designs, and Thymeleaf simplifies access to the data in the server's responses.

With CSS classes such as panel-default, panel-heading or panel-body, the predefined designs can be applied.

The result looks like this:

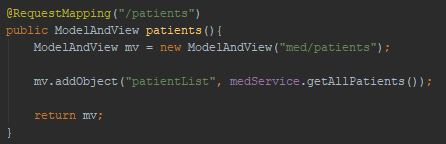

As for Thymeleaf, the object to be displayed is forwarded by the server, and the page template accesses it with ${…}.

A controller handles the routing of the pages through REST APIs.

There are further REST APIs for other operations. For more information, the source code can be downloaded from https://gitlab.dke.univie.ac.at/la.dennis/BacNaoProject.git

Results

As a result of the implementation, the user can add, update or delete data concerning patients, tasks and environments through the terminal.

When the user touches the sensors, the following happens:

- front sensor of the head – the NAO will speak all current tasks

- rear sensor of the head – the NAO will stop speaking until the program is restarted

- middle sensor of the left and right hand – with these two sensors the user can step through the list of tasks
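The touch behaviour listed above can be sketched as a small state machine. The sketch assumes the standard ALMemory tactile event keys (FrontTactilTouched, RearTactilTouched, HandLeftBackTouched, HandRightBackTouched), treats the back-of-hand sensors as the “middle” hand sensors, and assumes that the right hand steps forward and the left hand steps back with wrap-around. These mappings and the class name are hypothetical. A Consumer<String> stands in for ALTextToSpeech.say so the logic can run without a robot.

```java
import java.util.List;
import java.util.function.Consumer;

// Hypothetical sketch of the touch behaviour, not the project's code.
// Tactile events carry 1.0 when a sensor is pressed and 0.0 when released.
final class TouchController {
    private final List<String> tasks;
    private final Consumer<String> speak; // stands in for ALTextToSpeech.say
    private boolean muted = false;        // set by the rear sensor, cleared only by a restart
    private int cursor = -1;              // no task selected yet

    TouchController(List<String> tasks, Consumer<String> speak) {
        this.tasks = List.copyOf(tasks);
        this.speak = speak;
    }

    // Would be called from the onEvent callback of each ALMemory subscription.
    void onEvent(String eventName, float value) {
        if (value < 1.0f || muted) {
            return; // ignore releases, and stay silent once muted
        }
        if (eventName.equals("RearTactilTouched")) {
            muted = true;
            return;
        }
        if (tasks.isEmpty()) {
            return;
        }
        switch (eventName) {
            case "FrontTactilTouched":
                tasks.forEach(speak); // read out all current tasks
                break;
            case "HandRightBackTouched": // assumed: step forward
                cursor = (cursor + 1) % tasks.size();
                speak.accept(tasks.get(cursor));
                break;
            case "HandLeftBackTouched": // assumed: step back
                cursor = cursor <= 0 ? tasks.size() - 1 : cursor - 1;
                speak.accept(tasks.get(cursor));
                break;
            default:
                break; // other tactile events are not used
        }
    }
}
```

Ignoring the 0.0 release events keeps the NAO from reacting twice to a single touch.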

If the user says one of the keywords:

- “environment” – the NAO will describe the current environment

- “task” – the NAO will recite all current tasks
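For the voice commands, ALSpeechRecognition writes its result to the ALMemory key WordRecognized as a flat list of alternating phrases and confidence values. The sketch below takes the first pair, treats it as the best hypothesis, and maps the keyword to an action. The 0.4 confidence threshold, the action names and the class KeywordHandler are assumptions for illustration.

```java
import java.util.List;
import java.util.Locale;
import java.util.Optional;

// Hypothetical sketch, not the project's code. The value of the ALMemory key
// "WordRecognized" is a flat list: [phrase1, confidence1, phrase2, confidence2, ...].
final class KeywordHandler {
    static final float MIN_CONFIDENCE = 0.4f; // assumed threshold

    // Returns the first recognised phrase if its confidence is high enough.
    static Optional<String> bestKeyword(List<?> wordRecognized) {
        if (wordRecognized.size() < 2 || !(wordRecognized.get(1) instanceof Number)) {
            return Optional.empty();
        }
        float confidence = ((Number) wordRecognized.get(1)).floatValue();
        if (confidence < MIN_CONFIDENCE) {
            return Optional.empty();
        }
        // Remove the "<...>" markers that surround words when word spotting is on.
        String phrase = String.valueOf(wordRecognized.get(0))
                .replace("<...>", "")
                .trim()
                .toLowerCase(Locale.ROOT);
        return phrase.isEmpty() ? Optional.empty() : Optional.of(phrase);
    }

    // Maps a keyword to a hypothetical action name.
    static String action(String keyword) {
        switch (keyword) {
            case "environment":
                return "DESCRIBE_ENVIRONMENT";
            case "task":
                return "RECITE_TASKS";
            default:
                return "IGNORE";
        }
    }
}
```

Rejecting low-confidence results is a design choice that trades a few missed commands for fewer accidental announcements.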

Furthermore, when tasks are selected and executed, the NAO announces the start of each task.

To show the results, a screencast and a demo video of the NAO are provided below:

Screencast:

Demo video:

For further development, this experiment could be extended, for example:

- implement the XML import to make adding environments easier

- make actual self-driving robots move as soon as the tasks are executed

- extend the functionality or behaviour of the NAO, e.g. giving warnings if something does not work properly within the system

The source code can also serve as a basis for development in another direction, e.g. one in which the NAO not only supports the communication but also helps with the tasks themselves or with designing efficient models.