Use Case

Make tag detection and AR technologies more accessible by exposing them in a JavaScript-based development environment.

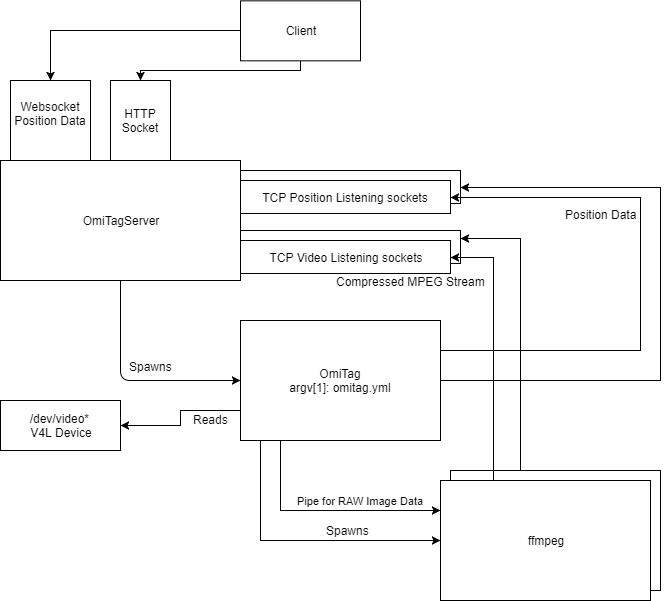

The project consists of multiple subsystems:

- A web-based development environment for implementing use cases

- Video streams from multiple cameras to support the AR system

- Tag detection, which provides position data via a WebSocket

The code is available on the DKE GitLab: https://gitlab.dke.univie.ac.at/OMiROB/OmiTagServer

Future Work:

- Finish Web-IDE (Integrate Three.js)

- Develop Use-Case Scenarios

- Implement Custom Scenarios

Projected/Virtual Camera

A virtual “projector” is created by using a shader to project the video texture(s) onto a 3D surface.

The scene camera can then be positioned freely, creating the illusion of a projector (or torchlight) for each webcam.
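Projecting a webcam image onto a surface is an instance of projective texture mapping: each surface point is transformed by the projector's (webcam's) view and projection matrices, and the resulting normalized device coordinates are remapped from [-1, 1] to texture coordinates in [0, 1]. The sketch below does this for a single point in plain Python, assuming OpenGL conventions (camera looking down -Z, a gluPerspective-style matrix). In the project the equivalent computation would run per fragment in a shader; the helper names and the projector placement are hypothetical.

```python
import math

# Matrices are row-major 4x4 nested lists acting on column vectors [x, y, z, 1].

def translation(tx, ty, tz):
    """4x4 translation matrix (used here as a view matrix without rotation)."""
    return [[1.0, 0.0, 0.0, tx],
            [0.0, 1.0, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]

def perspective(fovy_deg, aspect, near, far):
    """OpenGL-style (gluPerspective) projection matrix."""
    f = 1.0 / math.tan(math.radians(fovy_deg) / 2.0)
    return [[f / aspect, 0.0, 0.0, 0.0],
            [0.0, f, 0.0, 0.0],
            [0.0, 0.0, (far + near) / (near - far), 2.0 * far * near / (near - far)],
            [0.0, 0.0, -1.0, 0.0]]

def mat_vec(m, v):
    """Multiply a 4x4 matrix by a 4-vector."""
    return [sum(m[r][c] * v[c] for c in range(4)) for r in range(4)]

def projector_uv(point_world, view, proj):
    """Texture coordinates (u, v) of a world point as seen by the projector,
    or None if the point is behind the projector or outside its frustum."""
    clip = mat_vec(proj, mat_vec(view, [*point_world, 1.0]))
    w = clip[3]
    if w <= 0.0:
        return None  # behind the projector: no video lands here
    ndc = [clip[i] / w for i in range(3)]
    if any(abs(c) > 1.0 for c in ndc):
        return None  # outside the projector frustum
    # v may need flipping depending on how the video texture is uploaded
    return (0.5 * ndc[0] + 0.5, 0.5 * ndc[1] + 0.5)

# Hypothetical setup: projector at (0, 0, 2) looking along -Z at the z = 0 plane
view = translation(0.0, 0.0, -2.0)
proj = perspective(90.0, 1.0, 0.1, 10.0)
print(projector_uv((0.0, 0.0, 0.0), view, proj))  # (0.5, 0.5): centre of the video frame
```

Points that return None would simply receive no video texture, which is what produces the torchlight-like cone for each webcam.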

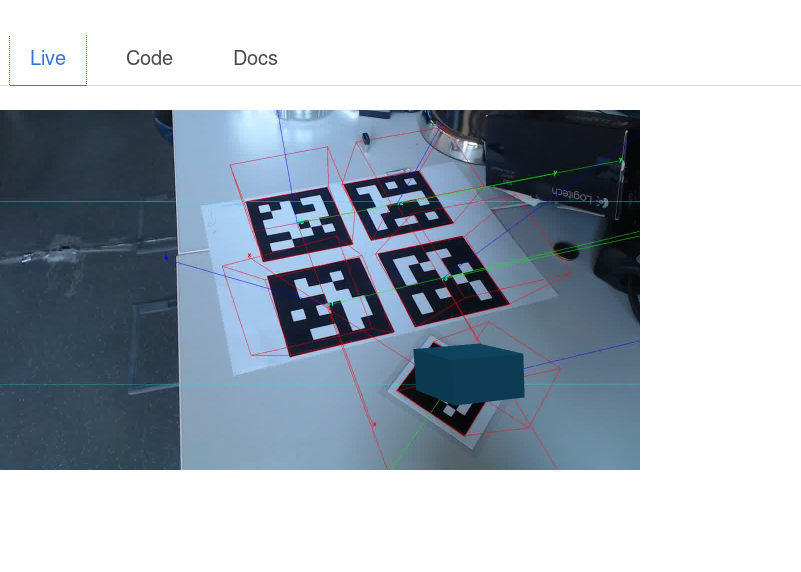

Experiment

Results

Tag Detection

OmiTag provides local position measurement using printed markers.

The preferred dictionary is ARUCO_MIP_36_H12, whose 36-bit markers (a 6×6 bit grid) have a minimum Hamming distance of 12 between codes.
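The distance between two markers is the number of differing bits, taken as the minimum over the four 90-degree rotations, since a marker can be seen in any orientation. A sketch, assuming a 36-bit code is packed row-major (most significant bit first) into an integer representing the 6×6 grid; the packing and helper names are illustrative, not ArUco's internal representation.

```python
N = 6  # 6x6 bit grid -> 36-bit marker code

def to_grid(code):
    """Unpack a 36-bit integer (row-major, MSB first) into a 6x6 list of bits."""
    bits = [(code >> (N * N - 1 - i)) & 1 for i in range(N * N)]
    return [bits[r * N:(r + 1) * N] for r in range(N)]

def from_grid(grid):
    """Pack a 6x6 list of bits back into an integer (row-major, MSB first)."""
    code = 0
    for row in grid:
        for bit in row:
            code = (code << 1) | bit
    return code

def rotate90(code):
    """Rotate the marker's bit grid by 90 degrees clockwise."""
    g = to_grid(code)
    return from_grid([[g[N - 1 - c][r] for c in range(N)] for r in range(N)])

def hamming(a, b):
    """Number of differing bits between two codes."""
    return bin(a ^ b).count("1")

def marker_distance(a, b):
    """Minimum Hamming distance between a and any 90-degree rotation of b."""
    best = N * N
    r = b
    for _ in range(4):
        best = min(best, hamming(a, r))
        r = rotate90(r)
    return best

print(marker_distance(0, 0xFFF))  # 12: twelve set bits differ in every rotation
```

With a minimum distance of d = 12, a decoder can in principle detect up to d - 1 = 11 bit errors or correct up to floor((d - 1) / 2) = 5; how many bits ArUco actually corrects depends on its detector settings.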

Augmented Reality

FFmpeg encodes the camera stream to MPEG-1, which a Java WebSocket server serves to multiple clients.

In the web browser, the MPEG-1 stream is decoded by jsmpeg.
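Because the ffmpegCmd in the omitag configuration uses `-f mpegts`, the data relayed to jsmpeg is an MPEG transport stream: a sequence of fixed-size 188-byte packets, each starting with the sync byte 0x47 and carrying a 13-bit packet identifier (PID) in its header. The sketch below (hypothetical helper names, not code from the Java server or from jsmpeg) splits a received buffer into complete packets and checks their sync bytes, which can help when debugging what the relay forwards.

```python
TS_PACKET_SIZE = 188
SYNC_BYTE = 0x47

def split_ts_packets(data: bytes):
    """Split a buffer into complete MPEG-TS packets.

    Returns (packets, remainder); the remainder is an incomplete trailing packet
    that should be prepended to the next chunk read from the stream.
    """
    full = len(data) - len(data) % TS_PACKET_SIZE
    packets = [data[i:i + TS_PACKET_SIZE] for i in range(0, full, TS_PACKET_SIZE)]
    for p in packets:
        if p[0] != SYNC_BYTE:
            raise ValueError("lost MPEG-TS sync")
    return packets, data[full:]

def packet_pid(packet: bytes) -> int:
    """13-bit packet identifier stored in header bytes 1 and 2."""
    return ((packet[1] & 0x1F) << 8) | packet[2]
```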

3D Environment

Three.js provides hardware-accelerated (WebGL) rendering in a canvas layered on top of the decoded ffmpeg stream.
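For the Three.js overlay to line up with the video, the virtual camera should have the same field of view as the physical one. Three.js' `PerspectiveCamera` takes a vertical field of view in degrees, which for a pinhole camera is 2·atan(h / (2·f_y)), where f_y is the focal length in pixels from the calibration and h the image height. A sketch, assuming the principal point is near the image centre and lens distortion is ignored; the function names and the focal length used are hypothetical.

```python
import math

def vertical_fov_deg(fy: float, image_height: int) -> float:
    """Vertical field of view of a pinhole camera in degrees (Three.js convention)."""
    return math.degrees(2.0 * math.atan(image_height / (2.0 * fy)))

def scale_fy(fy: float, src_height: int, dst_height: int) -> float:
    """A focal length in pixels scales linearly when the image is resized."""
    return fy * dst_height / src_height

fy_capture = 1400.0  # hypothetical f_y for a 1920x1080 capture
print(vertical_fov_deg(fy_capture, 1080))
```

The result can then be passed as `new THREE.PerspectiveCamera(fov, width / height, near, far)`. Since f_y scales with image height, the field of view is the same for the 1920x1080 capture and the 1280x720 stream sent to ffmpeg.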

Camera Calibration

Print the camera calibration board and run:

```sh
aruco_calibration live aruco_calibration_board_a4.yml camera_result.yml -size marker_size
```

replacing `marker_size` with the side length of the printed markers.

- Press 'a' to add the currently visible pattern to the pool of images used for calibration.

- Press 'c' to perform the calibration.

- Press 's' to start or stop the video capture.

The resulting YAML file (here camera_result.yml) must be referenced in the omitag YAML configuration via `camParamsFile`.
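The calibration estimates the camera's intrinsic parameters. Assuming the usual OpenCV pinhole model with five distortion coefficients (k1, k2, p1, p2, k3), which OpenCV-based calibration tools typically store, the sketch below projects a point given in camera coordinates (meters, Z pointing forward) to pixel coordinates. The `Intrinsics` type and `project` helper are illustrative names, and any values passed in are placeholders rather than real calibration results.

```python
from dataclasses import dataclass

@dataclass
class Intrinsics:
    """Camera matrix entries and OpenCV-style distortion coefficients (assumed model)."""
    fx: float
    fy: float
    cx: float
    cy: float
    k1: float = 0.0
    k2: float = 0.0
    p1: float = 0.0
    p2: float = 0.0
    k3: float = 0.0

def project(K: Intrinsics, X: float, Y: float, Z: float):
    """Project a 3D point in camera coordinates to pixel coordinates (u, v)."""
    if Z <= 0:
        raise ValueError("point must lie in front of the camera")
    x, y = X / Z, Y / Z                      # normalized image coordinates
    r2 = x * x + y * y
    radial = 1 + K.k1 * r2 + K.k2 * r2 * r2 + K.k3 * r2 ** 3
    xd = x * radial + 2 * K.p1 * x * y + K.p2 * (r2 + 2 * x * x)  # radial + tangential
    yd = y * radial + K.p1 * (r2 + 2 * y * y) + 2 * K.p2 * x * y
    return K.fx * xd + K.cx, K.fy * yd + K.cy
```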

Setup: Omitag

Omitag handles video stream processing, marker detection, and calculation of the resulting marker coordinates. Example configuration (omitag.yml):

```yaml
%YAML:1.0
posServer: "127.0.0.1:1956"
videoDevice: "/dev/video0"
camParamsFile: "logitech_c920.yml"
markerSize: 0.028
ffmpegCmd: "/opt/ffmpeg/ffmpeg -f rawvideo -pixel_format bgr24 -video_size 1280x720 -framerate 30 -i pipe:0 -f mpegts -c:v mpeg1video -c:a none -b:v 1500k -bf 0 http://localhost:8090/stream/input/1"
headless: 1
xResOut: 1280
xRes: 1920
yRes: 1080
pointsScale: 0.001
points:
- {id: 115, x:95, y:100, z:0}
- {id: 197, x:433, y:95, z:0}
- {id: 23, x:705, y:95, z:0}
- {id: 85, x:95, y:405, z:0}
- {id: 3, x:95, y:605, z:0}
```

| Name | Description |
|---|---|
| posServer | Target system (host:port) for position data |
| videoDevice | V4L video device |
| camParamsFile | File containing the calibration data from the camera calibration step |
| markerSize | Width of a marker in meters |
| ffmpegCmd | ffmpeg command line to execute |
| headless | 1: avoid using the window system |
| xResOut/yResOut | Resolution of the images piped to ffmpeg |
| xRes/yRes | Camera capture resolution |
| pointsScale | Multiplication factor for the coordinates in `points`; resulting values are in meters |
| points | Coordinates of the reference tags |
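Several values in omitag.yml depend on each other. The sketch below copies the relevant settings into a Python dictionary (to stay dependency-free; `ffmpegCmd` is shortened to its input options) and derives the reference points in meters via `pointsScale`, the output resolution, and the size of one raw bgr24 frame piped to ffmpeg (3 bytes per pixel). It assumes that yResOut keeps the capture aspect ratio, which is consistent with `-video_size 1280x720` in `ffmpegCmd` (1080 · 1280 / 1920 = 720) but is not stated by the configuration itself; the helper names are hypothetical.

```python
import re

# Values copied from the example omitag.yml (ffmpegCmd shortened to its input options)
CONFIG = {
    "xRes": 1920,
    "yRes": 1080,
    "xResOut": 1280,
    "pointsScale": 0.001,
    "ffmpegCmd": "/opt/ffmpeg/ffmpeg -f rawvideo -pixel_format bgr24 -video_size 1280x720 -framerate 30 -i pipe:0",
    "points": [
        {"id": 115, "x": 95, "y": 100, "z": 0},
        {"id": 197, "x": 433, "y": 95, "z": 0},
        {"id": 23, "x": 705, "y": 95, "z": 0},
        {"id": 85, "x": 95, "y": 405, "z": 0},
        {"id": 3, "x": 95, "y": 605, "z": 0},
    ],
}

def output_resolution(cfg):
    """Assumption: yResOut is derived so that the capture aspect ratio is kept."""
    w = cfg["xResOut"]
    return w, round(cfg["yRes"] * w / cfg["xRes"])

def ffmpeg_input_size(cmd):
    """Read WIDTHxHEIGHT from the -video_size option of the ffmpeg command line."""
    m = re.search(r"-video_size\s+(\d+)x(\d+)", cmd)
    if m is None:
        raise ValueError("no -video_size option in ffmpegCmd")
    return int(m.group(1)), int(m.group(2))

def bgr24_frame_bytes(width, height):
    return width * height * 3  # bgr24: one byte each for B, G and R

def points_in_meters(cfg):
    """Reference tag coordinates multiplied by pointsScale."""
    s = cfg["pointsScale"]
    return {p["id"]: (p["x"] * s, p["y"] * s, p["z"] * s) for p in cfg["points"]}

if __name__ == "__main__":
    out = output_resolution(CONFIG)
    if out != ffmpeg_input_size(CONFIG["ffmpegCmd"]):
        raise SystemExit(f"xResOut/yResOut {out} does not match ffmpeg -video_size")
    print("frame bytes:", bgr24_frame_bytes(*out))
    print("reference points [m]:", points_in_meters(CONFIG))
```

At 30 fps, 1280x720 bgr24 frames amount to about 83 MB/s of raw video on ffmpeg's stdin before MPEG-1 compression to the configured 1500 kbit/s.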

Setup: OmitagServer

```
port=8090
publicURL=http://127.0.0.1:8181
omitagCmd1=/path/to/omitag omitag1.yml
omitagCmd2=/path/to/omitag omitag2.yml
```

| Name | Description |
|---|---|
| port | Web service listening port |
| omitagCmd[1..9] | omitag command line |
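Each omitagCmdN entry starts one omitag instance with its own configuration file. As a sketch of how such a file can be read, assuming simple key=value lines (comment lines starting with # or ! are skipped as in Java properties files, which is an assumption about the format), the following parses the settings and returns the omitag command lines in numeric order, split into argument lists with `shlex`. The helper names are hypothetical and not taken from OmitagServer.

```python
import re
import shlex

CMD_KEY = re.compile(r"omitagCmd([1-9])$")  # omitagCmd1 .. omitagCmd9

def parse_config(text):
    """Parse key=value lines into a dict; blank and comment lines are ignored."""
    cfg = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith(("#", "!")):
            continue
        key, sep, value = line.partition("=")  # split at the first '=' only
        if not sep:
            raise ValueError(f"not a key=value line: {line!r}")
        cfg[key.strip()] = value.strip()
    return cfg

def omitag_commands(cfg):
    """Return the argv lists of all omitagCmdN entries, ordered by N."""
    cmds = []
    for key, value in cfg.items():
        m = CMD_KEY.match(key)
        if m:
            cmds.append((int(m.group(1)), shlex.split(value)))
    return [argv for _, argv in sorted(cmds)]
```

Each returned argument list could then be handed to `subprocess.Popen` to launch the corresponding omitag process.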