Use Case

Smart Drone Tourist Guide

The Smart Drone Tourist Guide is an autonomous drone that a user can book for a sightseeing trip. The idea is to create a model of a smart city path infrastructure in Adoxx and transmit the route to the drone. The drone follows the path, and when it reaches a tourist sight it stops automatically and waits for a certain hand gesture from the person before continuing the route. When the end of the route is reached, the drone lands safely at the destination point and is deactivated.

Code: https://gitlab.dke.univie.ac.at/a00851190/cmke_drone

During our work with the drone we gathered many resources that might be helpful to future developers. The links can be found here:

https://gitlab.dke.univie.ac.at/a00851190/cmke_drone/tree/master/resources

Adoxx Metamodel – Smart Drone Tourist Guide

The aim of the “Smart Drone Tourist Guide” Adoxx Metamodel is to let users model their own sightseeing route. After modelling the route, the user can execute the Adoscript, check which sights have been chosen and, if needed, remove some of them. Finally, the XML file containing the streets and sights is generated and saved.

Adoxx Metamodel

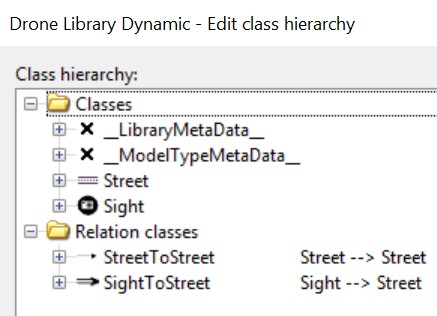

The Metamodel contains two Classes and two Relation Classes:

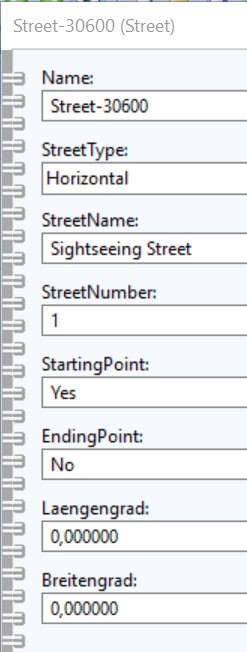

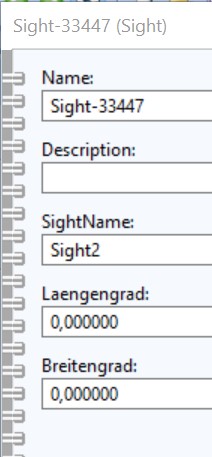

Attributes of the Classes

The attribute StreetType can take six different values. It determines the notation of the street and symbolises the turn in the road:

- Horizontal

- Vertical

- Top left

- Top right

- Bottom left

- Bottom right

The Attribute SightName may not contain any spaces.
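The attribute constraints above can be mirrored on the Python side before a model is routed. This is a minimal sketch, not part of the original code: the enum values are taken from the list above, the case-insensitive parse method is an assumption (the text writes the values both capitalised and in lower case), and rejecting every whitespace character in a SightName is a slightly stricter reading of the "no spaces" rule.

```python
from dataclasses import dataclass
from enum import Enum


class StreetType(Enum):
    """The six values of the StreetType attribute listed in the metamodel."""

    HORIZONTAL = "Horizontal"
    VERTICAL = "Vertical"
    TOP_LEFT = "Top left"
    TOP_RIGHT = "Top right"
    BOTTOM_LEFT = "Bottom left"
    BOTTOM_RIGHT = "Bottom right"

    @classmethod
    def parse(cls, label: str) -> "StreetType":
        # Assumption: exported labels may differ in case and surrounding whitespace.
        normalized = label.strip().lower()
        for member in cls:
            if member.value.lower() == normalized:
                return member
        raise ValueError(f"unknown StreetType: {label!r}")


@dataclass(frozen=True)
class Sight:
    name: str  # corresponds to the SightName attribute

    def __post_init__(self) -> None:
        # SightName may not contain spaces; this sketch rejects any whitespace.
        if any(ch.isspace() for ch in self.name):
            raise ValueError(f"SightName must not contain spaces: {self.name!r}")
```

Validating names and types this early means a malformed model is rejected on the laptop rather than discovered while the drone is in the air.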

Modelling Notations

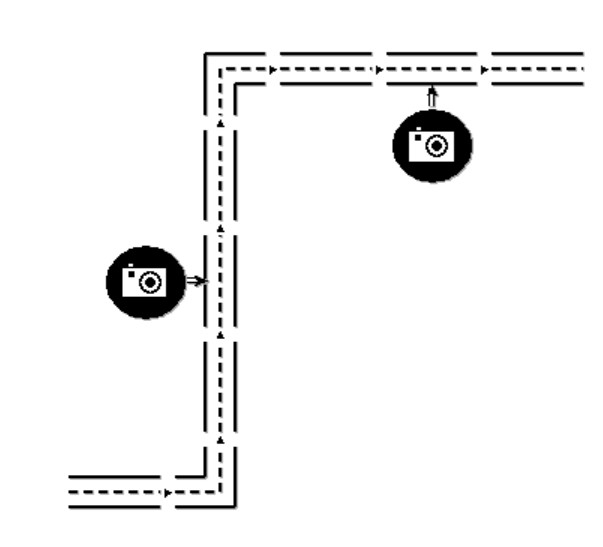

As described before, the notation of the street changes when the StreetType is changed. In this example the first street has the StreetType horizontal (fly straight), the second bottom right (turn left), and the remaining streets have the StreetType vertical (fly straight).

Drone Routing and Command Execution

The Adoscript dumps the current model into an XML file, which is then processed by the routing.py script to determine the correct sequence of the route and the points of interest, i.e. the streets that are placed next to sights. The drone then receives this route automatically and follows it, accompanying the customer along the sightseeing tour and stopping at each sight.

For all these tasks we used Python scripts. With the help of the “beautifulsoup” package we parsed the XML file and generated the desired flight route. To interact with the drone and send the appropriate commands, we used the pyparrot project (https://github.com/amymcgovern/pyparrot) on GitHub, thus realizing the Use Case.

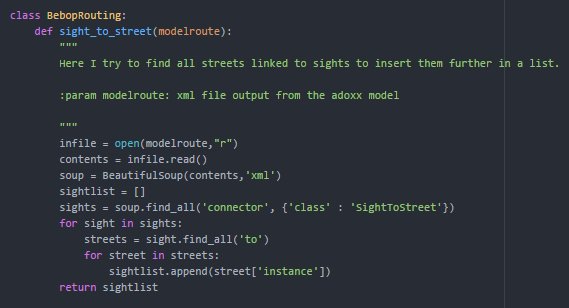

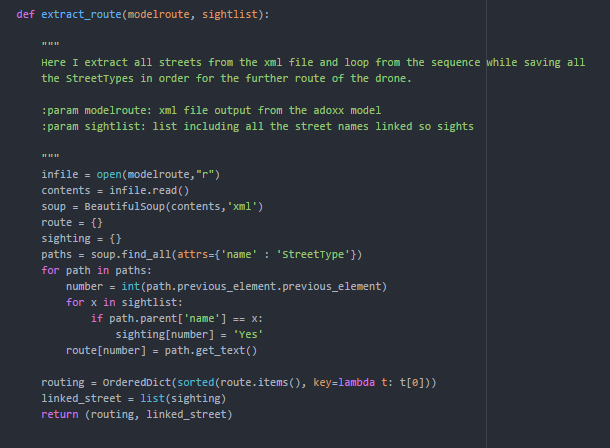

We start with the routing package, which translates the XML into a Python dictionary that later serves as the routing sequence for the drone.

The illustration above shows the search for all streets in the model's XML that are linked to a sight. At these points of interest we want the drone to stop, land and wait until the customer has viewed the respective sight. The result of this method is a list of the names of these streets, which is used in the next step.
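A minimal sketch of this search, assuming a simplified ADOXML-style export in which relations appear as CONNECTOR elements with FROM and TO children. The relation name hasSight and the class names Street and Sight are hypothetical placeholders for the names used in the actual metamodel. The sketch uses the standard library's xml.etree.ElementTree instead of BeautifulSoup so that it runs without extra packages, and it accepts the relation in either direction because the direction in the real model is not stated.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

# Hypothetical, simplified ADOXML-style export. The class names "Street" and
# "Sight" and the relation name "hasSight" are placeholders (assumptions).
SAMPLE_XML = """<ADOXML><MODELS><MODEL name="Tour">
  <INSTANCE class="Street" name="Street-1"/>
  <INSTANCE class="Street" name="Street-2"/>
  <INSTANCE class="Sight" name="Stephansdom"/>
  <CONNECTOR class="hasSight">
    <FROM instance="Street-2" class="Street"/>
    <TO instance="Stephansdom" class="Sight"/>
  </CONNECTOR>
</MODEL></MODELS></ADOXML>"""


def streets_with_sights(root: ET.Element, relation: str = "hasSight") -> list[str]:
    """Return the names of all streets linked to a sight, in document order."""
    stops = []
    for connector in root.iter("CONNECTOR"):
        if connector.get("class") != relation:
            continue
        source, target = connector.find("FROM"), connector.find("TO")
        if source is None or target is None:
            continue  # malformed connector: skip it
        # Map class -> instance name so the relation may point either way.
        ends = {
            source.get("class"): source.get("instance"),
            target.get("class"): target.get("instance"),
        }
        if "Street" in ends and "Sight" in ends:
            stops.append(ends["Street"])
    return stops


print(streets_with_sights(ET.fromstring(SAMPLE_XML)))  # ['Street-2']
```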

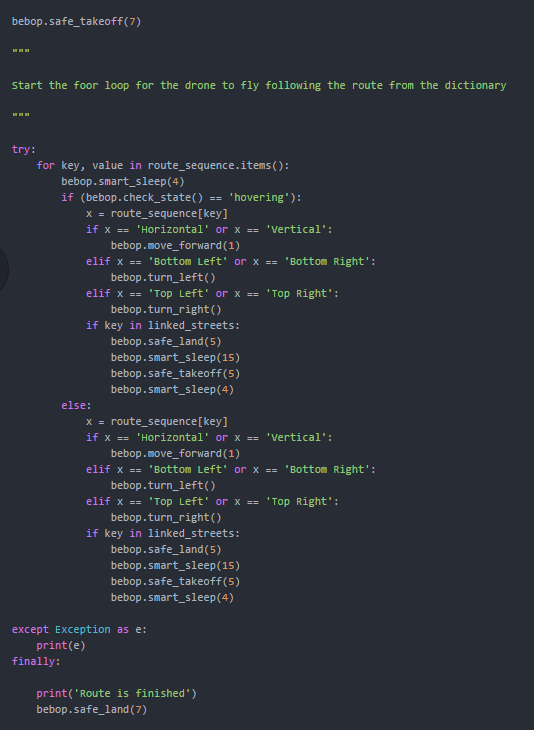

As shown in the illustration above, the next method uses this list to determine the sequence in which the streets of the model are flown over and where the drone stops so the user can visit the sight.

As shown above, the result is a list of the street numbers that are linked to sights and require a stop, and an ordered dictionary of the streets to fly, with the street number as the key and the street type as the value. The street type decides which command is sent to the drone, as described in the following.
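The ordering step can be sketched as follows, again assuming a simplified ADOXML-style export and a hypothetical relation class leadsTo that connects each street to the next one. The sketch starts at the only street without a predecessor, follows the successor links, and returns the stops in flight order together with an OrderedDict that maps each street to its StreetType. It keys streets by their instance name rather than a separate street number, which is an assumption about how the numbers are stored.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET
from collections import OrderedDict

# Hypothetical ADOXML-style export: "leadsTo" is a placeholder for the
# relation class that links each street to the next one.
SAMPLE_XML = """<ADOXML><MODELS><MODEL name="Tour">
  <INSTANCE class="Street" name="Street-3"><ATTRIBUTE name="StreetType">Vertical</ATTRIBUTE></INSTANCE>
  <INSTANCE class="Street" name="Street-1"><ATTRIBUTE name="StreetType">Horizontal</ATTRIBUTE></INSTANCE>
  <INSTANCE class="Street" name="Street-2"><ATTRIBUTE name="StreetType">Bottom right</ATTRIBUTE></INSTANCE>
  <CONNECTOR class="leadsTo"><FROM instance="Street-2" class="Street"/><TO instance="Street-3" class="Street"/></CONNECTOR>
  <CONNECTOR class="leadsTo"><FROM instance="Street-1" class="Street"/><TO instance="Street-2" class="Street"/></CONNECTOR>
</MODEL></MODELS></ADOXML>"""


def build_route(root: ET.Element, stops: list[str], relation: str = "leadsTo"):
    """Return (stops in flight order, OrderedDict street -> StreetType)."""
    street_types = {}
    for instance in root.iter("INSTANCE"):
        if instance.get("class") == "Street":
            attribute = instance.find("ATTRIBUTE[@name='StreetType']")
            text = attribute.text if attribute is not None else None
            street_types[instance.get("name")] = (text or "").strip()

    successor = {}
    for connector in root.iter("CONNECTOR"):
        source, target = connector.find("FROM"), connector.find("TO")
        if connector.get("class") == relation and source is not None and target is not None:
            successor[source.get("instance")] = target.get("instance")

    # The route starts at the only street that no other street leads to.
    starts = set(street_types) - set(successor.values())
    if len(starts) != 1:
        raise ValueError(f"expected exactly one start street, found {sorted(starts)}")

    route = OrderedDict()
    current = starts.pop()
    while current is not None:
        if current in route:
            raise ValueError(f"route contains a cycle at {current}")
        route[current] = street_types[current]
        current = successor.get(current)

    stop_set = set(stops)
    return [street for street in route if street in stop_set], route
```

Walking the successor links, rather than relying on the order of elements in the XML, keeps the route correct even when streets were created in a different order than they are flown.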

The drone is then started and takes off. Inside a for loop it receives different commands according to the street type, with the smart sleep function called in between to ensure that each part of the sequence is executed. For the street types “horizontal” and “vertical”, the drone simply moves forward 1 meter; if the value in the dictionary is “bottom left” or “bottom right”, it makes a left turn. If a sight is linked to the street, the drone lands, waits a certain time, takes off again and continues the flight. Finally, the drone lands when the route is finished.
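A sketch of this command loop, written against the pyparrot Bebop methods safe_takeoff, safe_land, smart_sleep and move_relative so that it can also be exercised with a stand-in object. The 1 m forward step and the left turn for “bottom left”/“bottom right” follow the text; the sign of the turn angle, the waiting and settling times, and rejecting the undescribed types “top left” and “top right” are assumptions. All street types are checked before take-off so that an unknown value cannot leave the drone hovering mid-route.

```python
import math

FORWARD_M = 1.0               # straight streets: move forward 1 m (from the text)
LEFT_TURN_RAD = -math.pi / 2  # assumption: negative heading change = left turn
STRAIGHT = {"horizontal", "vertical"}
LEFT_CORNERS = {"bottom left", "bottom right"}


def plan_commands(route):
    """Translate street types into (street, dx, dradians) moves before take-off."""
    plan = []
    for street, street_type in route.items():
        kind = street_type.strip().lower()
        if kind in STRAIGHT:
            plan.append((street, FORWARD_M, 0.0))
        elif kind in LEFT_CORNERS:
            plan.append((street, 0.0, LEFT_TURN_RAD))
        else:
            # "Top left"/"Top right" are not described in the text, so reject them.
            raise ValueError(f"no command defined for {street_type!r} on {street}")
    return plan


def fly_route(drone, route, stops, wait_s=10.0, settle_s=2.0):
    """Fly the route with a pyparrot-style Bebop object, landing at each stop."""
    plan = plan_commands(route)  # fails before take-off on unknown street types
    stop_set = set(stops)
    drone.safe_takeoff(10)
    drone.smart_sleep(settle_s)
    for street, dx, dradians in plan:
        drone.move_relative(dx, 0, 0, dradians)
        drone.smart_sleep(settle_s)  # give the drone time to finish the move
        if street in stop_set:
            drone.safe_land(10)
            drone.smart_sleep(wait_s)  # fixed wait while the tourist views the sight
            drone.safe_takeoff(10)
            drone.smart_sleep(settle_s)
    drone.safe_land(10)


# Usage with real hardware (not run here):
# from pyparrot.Bebop import Bebop
# bebop = Bebop()
# if bebop.connect(10):
#     fly_route(bebop, route, stops)
#     bebop.disconnect()
```

The turn direction should be verified on the real drone with a single small rotation before flying a full route.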

Hand Gesture Recognition

The user interacts with the drone via hand gestures to signal whether they have finished viewing the sight. We approached this task by splitting the problem into three subproblems:

- Hand Detection – Detect one hand in the input stream from the drone with the Haar cascade object detection algorithm.

- Hand Tracking – If detection was successful, track the hand with the KCF (Kernelized Correlation Filter) tracking algorithm. If the tracker loses the hand, detection is run again.

- Gesture Classification – Crop the tracked hand from the input frame and classify the image as “GO” or “WAIT” with a random forest classifier.
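The interplay of the three subproblems, including the fallback from tracking to detection, can be sketched independently of OpenCV. In the sketch below, detect, make_tracker and classify are hypothetical callables: in the real pipeline, detect would wrap a Haar cascade (for example cv2.CascadeClassifier.detectMultiScale), make_tracker would wrap a KCF tracker whose update(frame) returns a success flag and a bounding box, and classify would run the random forest on the cropped hand. How these objects are constructed depends on the OpenCV version and is not shown.

```python
from typing import Callable, Iterable, Iterator, Optional, Tuple

BBox = Tuple[int, int, int, int]  # x, y, width, height
Tracker = Callable[[object], Optional[BBox]]


def recognise_gestures(
    frames: Iterable[object],
    detect: Callable[[object], Optional[BBox]],
    make_tracker: Callable[[object, BBox], Tracker],
    classify: Callable[[object, BBox], str],
) -> Iterator[Optional[str]]:
    """Yield one label ("GO"/"WAIT") per frame, or None while no hand is found."""
    track: Optional[Tracker] = None
    for frame in frames:
        bbox = track(frame) if track is not None else None
        if bbox is None:
            # No tracker yet, or the tracker lost the hand: fall back to detection.
            track = None
            bbox = detect(frame)
            if bbox is not None:
                track = make_tracker(frame, bbox)  # initialise tracking on this frame
        yield classify(frame, bbox) if bbox is not None else None
```

Running the comparatively expensive detector only when tracking fails is what keeps the per-frame cost low.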

For all these subtasks we made extensive use of Python, OpenCV and scikit-learn. Additionally, we used multithreading and processing queues to minimize the lag between frames. We also installed CUDA for our NVIDIA GPU to speed up OpenCV's image processing. To set up streaming from the drone to the laptop, we used ffmpeg to redirect the stream to a UDP port, which can then be read with OpenCV. The code for this part can be found in the handsign_recognition folder on the Omilab GitLab server. We aimed to keep it readable and commented it heavily.
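The multithreading idea can be illustrated with a reader thread that always keeps only the newest frame in a queue of size one, so the slower recognition loop never works through a backlog of stale frames. This is a minimal sketch, not the code from the handsign_recognition folder; it assumes read_frame behaves like cv2.VideoCapture.read, returning a success flag and a frame, with the capture opened on the UDP address that ffmpeg forwards the stream to.

```python
import queue
import threading


def put_latest(q: queue.Queue, item) -> None:
    """Put item into a size-1 queue, discarding any stale frame still waiting."""
    while True:
        try:
            q.put_nowait(item)
            return
        except queue.Full:
            try:
                q.get_nowait()  # drop the old frame to make room
            except queue.Empty:
                pass  # the consumer took it in the meantime


def start_reader(read_frame, q: queue.Queue, stop: threading.Event) -> threading.Thread:
    """Read frames in a background thread so processing never waits on I/O."""
    def run() -> None:
        while not stop.is_set():
            ok, frame = read_frame()
            if not ok:
                break  # stream ended or failed
            put_latest(q, frame)

    thread = threading.Thread(target=run, daemon=True)
    thread.start()
    return thread
```

The consumer then simply calls q.get() in its own loop and always receives the most recent frame.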

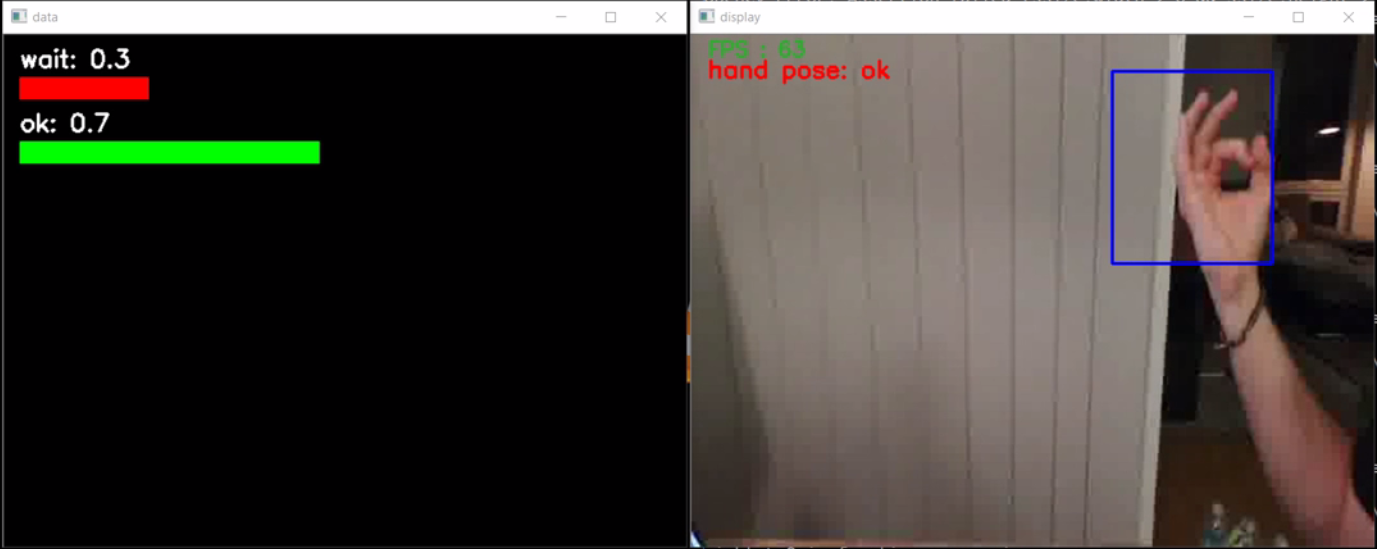

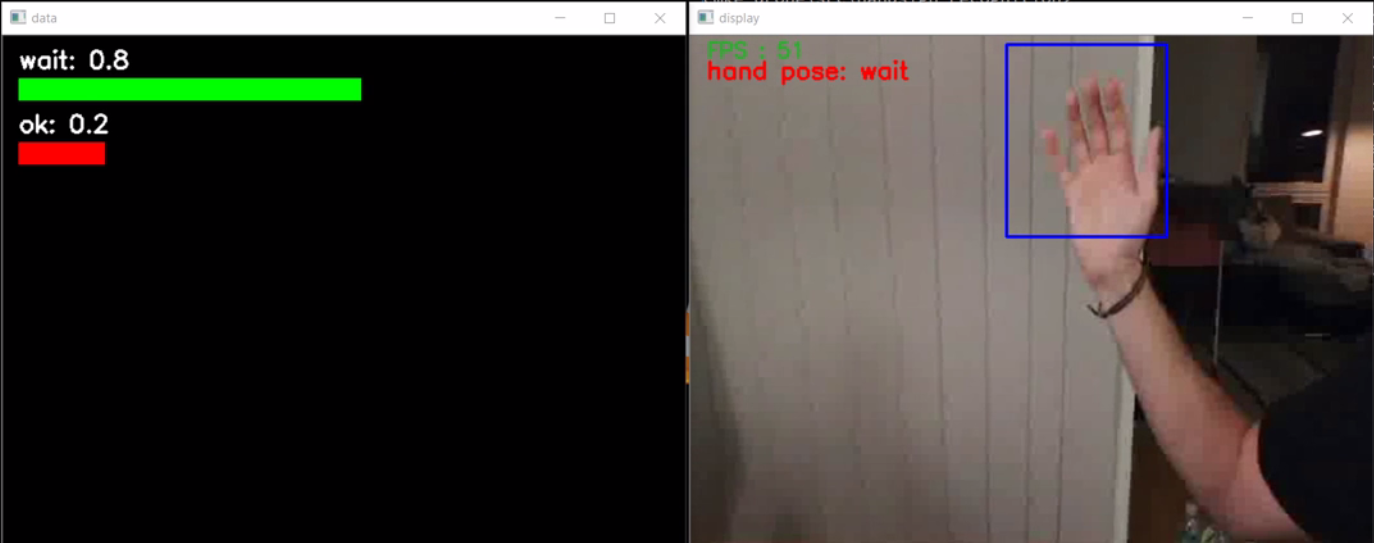

The two pictures above show one frame of the stream from the drone while the hand gesture algorithm is running. The blue bounding box on the right is cropped from the frame and sent to the random forest classifier, which returns the predictions and confidence probabilities displayed on the left side.
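The cropping and classification step can be sketched as below. The patch size, the nearest-neighbour resizing with plain NumPy indexing and the scaling to [0, 1] are assumptions, since the original preprocessing is not described. clf is expected to be a fitted scikit-learn classifier such as RandomForestClassifier, whose predict_proba returns one probability per entry of classes_. Whatever preprocessing is chosen, the training images must pass through the same function.

```python
import numpy as np

PATCH_SIZE = 32  # assumption: side length of the patches the classifier was trained on


def crop_features(frame: np.ndarray, bbox, size: int = PATCH_SIZE) -> np.ndarray:
    """Crop the bounding box from the frame and turn it into a flat feature vector."""
    x, y, w, h = (int(v) for v in bbox)
    crop = frame[max(y, 0):y + h, max(x, 0):x + w]
    if crop.size == 0:
        raise ValueError("bounding box lies outside the frame")
    # Nearest-neighbour resize with NumPy indexing (cv2.resize would also work).
    rows = np.linspace(0, crop.shape[0] - 1, size).round().astype(int)
    cols = np.linspace(0, crop.shape[1] - 1, size).round().astype(int)
    patch = crop[np.ix_(rows, cols)]
    return patch.astype(np.float32).ravel() / 255.0


def classify_hand(clf, frame: np.ndarray, bbox):
    """Return the most likely label ("GO"/"WAIT") and the probability per label."""
    features = crop_features(frame, bbox).reshape(1, -1)
    probabilities = clf.predict_proba(features)[0]
    scores = dict(zip(clf.classes_, probabilities))
    label = max(scores, key=scores.get)
    return label, scores
```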

Experiment

The Testing Model:

For testing and safety reasons we modelled a very simple route with only two sights. A long and complex route could have been dangerous because of the limited space in our testing area. We did not use GPS data, due to the poor quality of GPS signals indoors. Nevertheless, we included attributes for GPS functionality in the metamodel. To move the drone, we used its relative position to send simple flight commands. When the drone reaches a sight, it should stop, wait for a hand gesture from the user and then continue the route. This repeats until the end of the tour is reached.

Results

Approach

The first problem we faced was finding a place to test the drone. The Omilab at the University of Vienna is too small for test flights, and flying a drone in public areas in Austria is, strictly speaking, not allowed without a license, so we had to find a larger private location. Finally, we found the “Nordbahn-Halle” (https://www.nordbahnhalle.org), which offered us an empty warehouse with enough space to test the drone.

Achievements:

As shown in the video, the drone receives the commands to fly along the route modelled in the Adoxx Modelling Toolkit. Moreover, the two landing sequences are triggered when the drone reaches a sight. For the other movements, see the description above (Use Case). We thus achieved the goal of establishing an interface between the model and the drone. Unfortunately, due to time constraints and the problems we had with actual testing, we did not manage to test the drone together with the gesture recognition. This part is implemented but runs separately with the drone and is not yet integrated into the complete process. Integrating it would be one of the future improvements.

Improvements:

For future developers we want to highlight some points that we recommend considering or improving when working with the drone.

First, it would be important to establish a method that checks whether the drone is drifting, i.e. whether it keeps moving uncontrollably while in hovering mode. We have already implemented such a method in Bebop.py, but it needs further testing before it can be used on the drone.
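Since the method in Bebop.py is not shown here, the following is only a hypothetical sketch of one way to detect drift: while the drone is supposed to hover, integrate its horizontal speed readings over time and flag drift once the estimated displacement exceeds a threshold. The 0.5 m threshold and the (dt, speed_x, speed_y) sample format are assumptions; how the speed values are read from pyparrot's sensor data is left open.

```python
import math
from typing import Iterable, Tuple


def is_drifting(
    samples: Iterable[Tuple[float, float, float]],
    max_distance_m: float = 0.5,  # assumption: tolerated displacement while hovering
) -> bool:
    """Return True if the integrated horizontal movement exceeds max_distance_m.

    samples: (dt_seconds, speed_x_m_s, speed_y_m_s) readings taken while the
    drone is supposed to hover in place.
    """
    x = y = 0.0
    for dt, vx, vy in samples:
        # Dead reckoning: accumulate displacement from speed and elapsed time.
        x += vx * dt
        y += vy * dt
        if math.hypot(x, y) > max_distance_m:
            return True
    return False
```

Integrating speed accumulates sensor error over long periods, so in practice the check would be applied over a short window after each hover command.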

Secondly, we highly recommend implementing a function that tracks the internal state of the drone, because some of the commands we sent seemed to be simply ignored. Additionally, a function is needed that copes with the lag between sending commands and receiving feedback from the drone; this would be essential for navigating the drone safely. We tried to handle this problem with smart sleep calls that give each command time to execute so that none is skipped. While this worked in some cases, it is only a quick fix and should be handled differently in the future.
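One way to address ignored commands and feedback lag, sketched here as a hypothetical helper rather than the project's code, is to send a command and then poll the drone's reported state until it confirms the command, resending after a timeout. send and is_done are placeholders: is_done could, for example, check the flying state reported by pyparrot's sensor object (the attribute name should be verified for the pyparrot version in use). The clock and sleep functions are injectable so the logic can be tested without a drone.

```python
import time
from typing import Callable


def send_and_confirm(
    send: Callable[[], None],
    is_done: Callable[[], bool],
    timeout_s: float = 5.0,
    retries: int = 2,
    poll_s: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Send a command and wait until the drone's reported state confirms it.

    Resends up to `retries` times if no confirmation arrives within `timeout_s`.
    Returns True on confirmation, False if every attempt timed out.
    """
    for _ in range(retries + 1):
        send()
        deadline = clock() + timeout_s
        while clock() < deadline:
            if is_done():
                return True
            sleep(poll_s)  # poll the state instead of sleeping a fixed time
    return False
```

Unlike a fixed sleep, this waits only as long as the drone actually needs and reports failure explicitly, so the caller can decide to land instead of continuing the route blindly.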

YouTube Video