Use Case

In the near future, intelligent robot assistants might become reality, and interactions between humans and computers are already becoming more and more common. For robots to live alongside humans, they need to possess some form of emotional intelligence. In this project we look at how the information gained from natural language processing (NLP) can be used to determine someone’s sentiment, and in particular at how emotionally intelligent a machine can become. The goal of this project is not only to develop a prototype that can interpret the emotions of a human in a conversation, but also to change the environment based on the detected emotions.

Having a robot such as a NAO at home as an intelligent assistant will be like having a butler. He can go to different rooms, ensure everyone is safe and report anything suspicious. It makes sense for the robot to be connected to home automation software, so that the robot can directly control light, temperature and other environment variables. However, the NAO is not only interacting with the different devices inside the house, he is also interacting with the people who live there. For that, the NAO needs emotional intelligence. In this project we want to showcase how these two areas can be connected in a meaningful way. Our goal is to have the NAO robot interpret emotions from a conversation and then change the environment accordingly. We want this interaction to be standardized by a model. This means that the robot will engage in a conversation, detect the mood and then check a model to know how to react to the detected sentiment. For example, the robot should change the color of the lights (ambient lighting) and also play a song that reflects the mood of the conversation.

Experiment

DevOps

To start developing with the NAO, you need to install the NAOqi SDK. The SDK supports three languages: Python, Java and C++. This project was done in Python. Below we go through the steps to set up development for the NAO with Python on macOS. Our Git repository can be found here, and any issues can be reported here.

Setup

To download the NAOqi SDK, go to the SoftBank community website. An account is required; once you register, any account has access to the downloads. On the left there is a type filter, which can be used to filter for the NAO robot (don’t install the software for Pepper). There you can download the SDK for your development language. If you scroll down, the most recent documentation can be downloaded under other resources.

Once you have the SDK and the documentation, let’s dive right into developing for the NAO. Open the documentation folder, e.g. aldeb-doc-x.x.x-x.xx. It contains an index.html file; open it to start reading the documentation. On the index.html page, go to Installing Python SDK, where you will find the installation instructions for your OS.

On macOS, it is unfortunately only possible to develop with the built-in Python 2.7.xx located at /usr/bin/python. The documentation states:

Use the release of Python 2.7 that comes by default with your MacOS.

Do not use a Python version downloaded from Python.org.

To install packages for this Python distribution, use /usr/bin/easy_install or /usr/bin/local/easy_install.

Setting your environment variables can be done by editing your .bash_profile. Add these two lines:

export PYTHONPATH="${PYTHONPATH}:$HOME/pathto/naoqiSDKLibrary"

export DYLD_LIBRARY_PATH="${DYLD_LIBRARY_PATH}:$HOME/pathto/naoqiSDKLibrary"

The setup should now be complete. If you run into any problems, try checking the forum.
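One quick way to confirm that the environment variables took effect is to check whether the SDK can be imported from the interpreter you plan to use. The following is a minimal sketch; the helper name sdk_available is our own and not part of the SDK.

```python
import importlib


def sdk_available(module_name="naoqi"):
    """Return True if the given module can be imported in this interpreter."""
    try:
        importlib.import_module(module_name)
    except ImportError:
        # Typically raised when PYTHONPATH does not point at the SDK,
        # or when the SDK's native libraries cannot be loaded.
        return False
    return True


if __name__ == "__main__":
    print("naoqi importable: %s" % sdk_available())
```

If this reports False, open a new terminal so that .bash_profile is read again and double-check both paths.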

Run your first code on the NAO

To start working with the NAO, you first need to start it up. Press the big button in the middle of the robot (on his chest) and give it 2-3 minutes to boot. Once the robot is running, make sure you can connect to it. This can be done via the browser. You need to know its IP address and be connected to the same WiFi network. Enter the IP address into the browser; if the NAO is running, the browser should show an overview page of the NAO.
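The same reachability check can also be scripted. The sketch below simply tries to open a TCP connection to the robot; the function name nao_reachable, the default port 80 (the web page) and the timeout are our own assumptions.

```python
import socket


def nao_reachable(host, port=80, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        conn = socket.create_connection((host, port), timeout)
    except (socket.error, socket.timeout):
        # Refused, unreachable or timed out: treat all as "not reachable".
        return False
    conn.close()
    return True
```

For example, nao_reachable("192.168.75.41") checks the overview page, and passing port 9559 checks the port that the sample code in this post hands to ALBroker.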

Once the NAO is up and running, let’s try to connect to it via the NAOqi SDK. Start /usr/bin/python on your Mac, or your Python distribution on another OS. Let’s make the robot talk; the code for that is below. Just copy and paste it into the interactive Python shell. The broker creates a persistent connection, NAO_IP is the IP address of the robot, and tts is a proxy to the text-to-speech module. Finally, tts.say is the command that makes the robot speak. You have now written your first program for the NAO.

from naoqi import ALProxy

from naoqi import ALBroker

NAO_IP = "192.168.75.41"

myBroker = ALBroker("myBroker", "0.0.0.0", 0, NAO_IP, 9559)

tts = ALProxy("ALTextToSpeech")

tts.say('Hello, World!')

It is also possible to connect to the NAO over SSH, e.g. ssh nao@192.168.75.41. To copy a file to the NAO, use scp, e.g. scp test.wav nao@192.168.75.41:test.wav.

Our Implementation

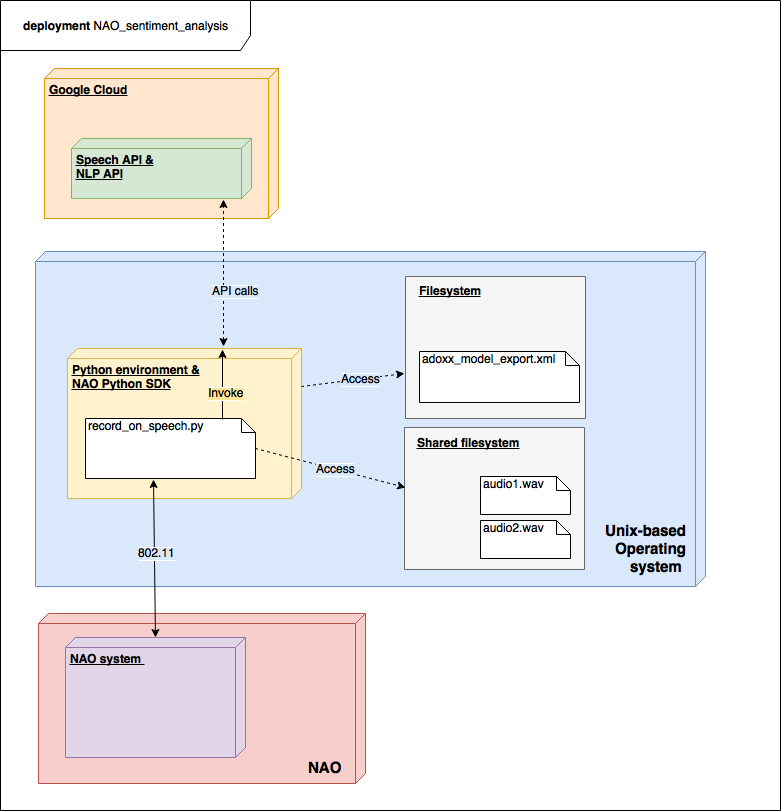

For our implementation we used the NAO framework described above, Google Cloud and a Philips hub to connect to the light(s). Our code implements the onWordRecognized event, which causes the NAO to listen for 5 seconds and then analyze the emotion of the recorded audio file. With an ADOxx model we defined how the robot should change the environment depending on the resulting emotional quotient.
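To illustrate the idea of looking up a reaction in a model, here is a minimal sketch in plain Python. It is not the ADOxx model itself: the score range of -1.0 to 1.0, the thresholds, the colors, the song file names and the names Reaction, RULES and choose_reaction are all hypothetical placeholders.

```python
from collections import namedtuple

# Hypothetical reaction record; the field names are illustrative only.
Reaction = namedtuple("Reaction", ["mood", "light_color", "song"])

# Hypothetical rules, ordered by the upper bound of the score range they cover.
# Scores are assumed to lie in [-1.0, 1.0]; thresholds, colors and file names
# are placeholders, not values taken from the ADOxx model.
RULES = [
    (-0.25, Reaction("negative", "blue", "calm.wav")),
    (0.25, Reaction("neutral", "white", "neutral.wav")),
    (1.0, Reaction("positive", "yellow", "upbeat.wav")),
]


def choose_reaction(score, rules=RULES):
    """Return the first reaction whose upper bound is >= the clamped score."""
    clamped = max(-1.0, min(1.0, score))
    for upper_bound, reaction in rules:
        if clamped <= upper_bound:
            return reaction
    # Only reached if the last rule's bound is below 1.0.
    return rules[-1][1]
```

Keeping the rules in a data structure rather than in if/else branches mirrors the point of using a model: the reactions can be changed without touching the robot code.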

To run our code, use /usr/bin/python hello_detection.py. The required installations and the changes that need to be made are listed below:

Change all of the API keys to your own Google Cloud API keys, and update the Philips API key with one obtained from your Philips hub. The relevant variables in the code are uri_cl1, uri and uri_sent. Install the Google Cloud SDK to use gsutil and gcloud. You also need sox to convert audio files. Lastly, the Python library requests is required.

Possible improvements

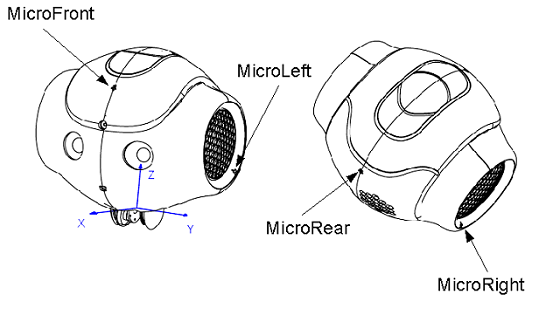

One improvement would be to have the NAO analyze the audio directly, without converting it to text first. We looked into a few libraries, but could not find a satisfactory solution for our use case. We also looked into some of the solutions discussed here on ResearchGate. Most of their code is written in C++, and a lot of it is not well maintained. Further, the speech recognition could be improved. The NAO currently listens for a single word, but it could also listen for a specific word within a sentence. Alternatively, another approach could be taken in which the NAO constantly records his surroundings and analyzes them in real time.

Results

The following video shows how well the experiment works. It involves multiple steps. First, the NAO listens for the word “home”. Once the word is recognized, the NAO records for five seconds. Once the recording is done, it is sent to our environment, where we perform a sentiment analysis. Next, based on the resulting value of the sentiment analysis, the NAO is told to play a song. The song is chosen according to a model that has been developed with ADOxx. The environment (the lamp) is also changed according to the scenario configuration in the model. Finally, once the song is done playing, the lights of the NAO also change to the color of the lamp.
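The sequence of steps can be summarized in a short sketch. This is not our actual hello_detection.py: every step is passed in as a function, all parameter names are hypothetical, and the relative order of changing the lamp and playing the song is an assumption.

```python
def run_once(wait_for_keyword, record, analyze, choose, play_song,
             set_lamp, set_robot_leds, keyword="home", seconds=5):
    """Run one listen-analyze-react cycle and return (song, color)."""
    wait_for_keyword(keyword)      # 1. block until the keyword is heard
    audio = record(seconds)        # 2. record the speaker
    score = analyze(audio)         # 3. sentiment analysis, done off the robot
    song, color = choose(score)    # 4. look up the reaction in the model
    set_lamp(color)                # 5. change the environment (the lamp)
    play_song(song)                # 6. play the song; assumed to block until done
    set_robot_leds(color)          # 7. match the robot's lights to the lamp
    return song, color
```

Passing the steps in as functions keeps the flow testable without a robot, since each one can be replaced by a stub.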