Use Case

The aim of the project is to provide a system that records and streams a table soccer game and analyses aspects of the game in parallel and in real time. Its inputs are video data from cameras and acceleration data from suitable sensors. Its outputs are the position of the ball and statistics about the players' movements and the power with which they hit the ball.

The project is divided into three specific Use Cases:

- First, a prototype of the system gathers live video data from two separate cameras, each recording one half of the table. The system spots the ball using image recognition and reports the ball's position, more precisely, which half of the table the ball is on.

- In the second part of the Use Case, the system gathers player-specific data from an acceleration sensor to compile statistics. In the process, the software calculates the shot speed by measuring the acceleration of the handle bar.

- The third part of the Use Case is based on the first part. Its goal is to show the exact position of the ball in a model. To do so, the system uses image recognition as in the first part, but the output is image data in which the user can see the current position of the ball in real time.

Experiment

First part of the Use Case (image recognition)

To realize the first part of the Use Case, image recognition software is needed. OpenCV, an open source library for image recognition, fits the needs well and is free of charge. One advantage of the library is its versatility in programming languages: it is available for C, C++, Python and Java. Another advantage is the thorough documentation (https://docs.opencv.org/) and the large body of documented research and development already done. For the implementation of the Use Case described above, Java is used as the programming language.

As described above, the system for the first part of the Use Case includes two cameras, each recording one half of the table. The plan was to use a Canon EOS 750D digital single-lens reflex camera and the built-in camera of an iPhone 5S. An HP ProBook 450 serves as the host of the system. Problems occurred at the very beginning of the experiment because the host did not recognize the connected cameras as imaging devices but as mass storage devices. For this reason, the HP's built-in webcam is used for the rest of the first part of the Use Case.

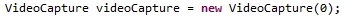

The source code for the Use Case is available at https://gitlab.dke.univie.ac.at/edu-semtech/XSTImageRecognitionTableSoccer. For the first part of the Use Case, three classes are needed (VideoStream, MyPanel, Tracking). VideoStream.java is the main class. It creates two frames: one shows the camera picture with the marked objects, the other shows a masked frame (black and white) that makes it visible why these objects are marked. VideoStream accesses the webcam by its device index (0 is the index of the built-in webcam).

Tracking.java is used to track the ball. This class contains several methods that transform a picture. In this example, the colour mode is changed first (hsvImage()). The resulting picture is converted into a grayscale picture (getGrayFrame()), which is then masked (getMask()), meaning that only pixels within a specific value range are kept. markobject() looks for the contours in the masked frame and draws them into the original picture.
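The masking idea can be illustrated without OpenCV. The following is a minimal sketch in plain Python, not the project's Java code: the function names, the threshold values and the pixel format (rows of RGB tuples) are assumptions made for illustration. It converts each pixel to HSV, keeps bright and weakly saturated pixels as "white", and computes the centroid of the resulting mask. Deciding the table half from a single frame is also an assumption; with two cameras, the half follows from which camera sees the ball.

```python
import colorsys


def white_mask(frame, max_saturation=0.25, min_value=0.8):
    """Return a binary mask (rows of 0/1) marking bright, weakly saturated pixels.

    `frame` is a list of rows of (r, g, b) tuples with channels in 0..255.
    The thresholds are illustrative assumptions, not values from the project.
    """
    mask = []
    for row in frame:
        mask_row = []
        for r, g, b in row:
            _, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            mask_row.append(1 if (s <= max_saturation and v >= min_value) else 0)
        mask.append(mask_row)
    return mask


def mask_centroid(mask):
    """Return the (x, y) centroid of all set pixels, or None if none are set."""
    sum_x = sum_y = count = 0
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)


def table_half(x, frame_width):
    """Hypothetical helper: name the half of a single frame that contains x."""
    return "left" if x < frame_width / 2 else "right"
```

A real implementation would work on camera frames and use the contour functions of the image library instead of a plain centroid.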

Second part of the Use Case (acceleration measurement)

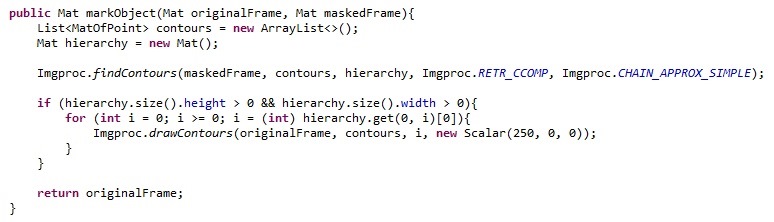

To realize the second part of the Use Case, an independent system has been implemented. It uses one MPU6050 gyroscope and acceleration sensor, which is fixed to one of the table's handle bars.

The sensor is connected to a Raspberry Pi Zero on which a Python script processes the data. The following tutorial shows how to connect the sensor to the Raspberry Pi and gives a short example script for reading the sensor's data: https://tutorials-raspberrypi.de/rotation-und-beschleunigung-mit-dem-raspberry-pi-messen/.
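How a shot speed could be derived from the handle bar acceleration can be sketched as a numerical integration. The example below is an assumption about one possible approach, not the project's script: it expects acceleration samples in g along the direction of motion, with gravity and sensor offset already removed, taken at a fixed sampling interval. The function name and parameters are hypothetical.

```python
G = 9.80665  # standard gravity in m/s^2


def peak_speed(samples_g, dt):
    """Integrate acceleration samples (in g) with the trapezoidal rule.

    `samples_g` holds accelerations along the direction of motion, `dt` is the
    sampling interval in seconds. Returns the largest absolute speed in m/s
    reached during the sequence, starting from rest.
    """
    speed = 0.0
    peak = 0.0
    for a0, a1 in zip(samples_g, samples_g[1:]):
        speed += 0.5 * (a0 + a1) * G * dt
        peak = max(peak, abs(speed))
    return peak
```

Because integration accumulates every measurement error, such an estimate is only plausible over the short duration of a single shot.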

Third part of the Use Case (model transformation)

The third part of the Use Case is based on the first part. Because of the trouble with the cameras, it was decided that the third part uses recorded video data instead of live video data. For this purpose, the Canon EOS 750D DSLR is used and positioned on one long side of the table. To optimize the output, the white side parts on the inner side of the table have been covered with dark board.

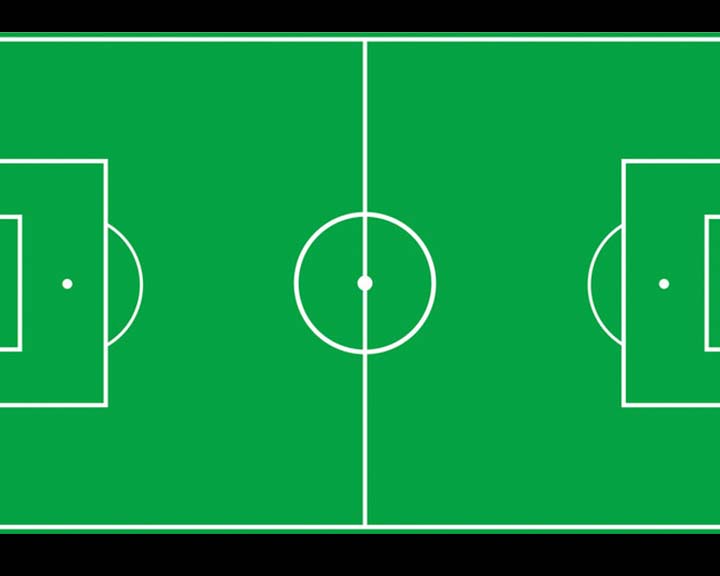

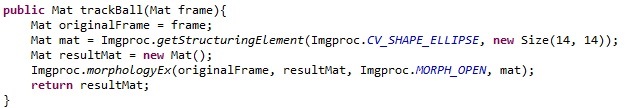

The software of the first part can be reused, especially MyPanel.java and Tracking.java. The new main class "MyVideo" opens two frames. The first simply shows the video of the game. The second shows a sketch of a soccer pitch in exactly the same perspective in which the video shows the original table. The position of the ball is marked on this sketch. To do so, trackBall() takes a masked frame and looks for white ellipses of a particular size.
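Filtering mask regions by size can be sketched as follows. This is a plain-Python illustration under assumptions, not the logic of trackBall(): it finds connected regions in a binary mask, describes each by its bounding box, and keeps only boxes that are roughly round and within a size range. The function names and the limits are hypothetical.

```python
def find_blobs(mask):
    """Return bounding boxes (x, y, w, h) of 4-connected regions of set pixels."""
    height = len(mask)
    width = len(mask[0]) if mask else 0
    seen = [[False] * width for _ in range(height)]
    boxes = []
    for y0 in range(height):
        for x0 in range(width):
            if not mask[y0][x0] or seen[y0][x0]:
                continue
            # Flood fill from this pixel and track the region's extent.
            stack = [(x0, y0)]
            seen[y0][x0] = True
            min_x = max_x = x0
            min_y = max_y = y0
            while stack:
                x, y = stack.pop()
                min_x, max_x = min(min_x, x), max(max_x, x)
                min_y, max_y = min(min_y, y), max(max_y, y)
                for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
                    if (0 <= nx < width and 0 <= ny < height
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        stack.append((nx, ny))
            boxes.append((min_x, min_y, max_x - min_x + 1, max_y - min_y + 1))
    return boxes


def ball_candidates(boxes, min_size=2, max_size=4, max_aspect=1.5):
    """Keep boxes whose width and height fit the size range and are nearly equal."""
    result = []
    for x, y, w, h in boxes:
        aspect = max(w, h) / min(w, h)
        if min_size <= w <= max_size and min_size <= h <= max_size and aspect <= max_aspect:
            result.append((x, y, w, h))
    return result
```

The size limits would have to be tuned to the resolution of the video and the apparent size of the ball.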

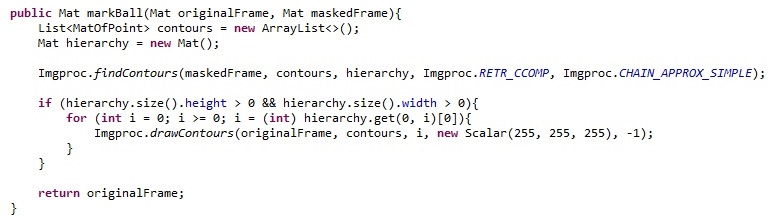

To transfer the ball to the sketch, markBall() combines these two pictures.
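Since the sketch uses the same perspective as the video, transferring a position can be as simple as scaling the coordinates. The following sketch assumes that the video frame and the pitch sketch cover the same area and differ only in resolution; the function name and the example sizes are hypothetical and not taken from markBall().

```python
def to_sketch(ball_xy, frame_size, sketch_size):
    """Scale a pixel position from the video frame to the pitch sketch.

    `ball_xy` is (x, y) in the frame, `frame_size` and `sketch_size` are
    (width, height) in pixels. Returns integer pixel coordinates in the sketch.
    """
    x, y = ball_xy
    frame_w, frame_h = frame_size
    sketch_w, sketch_h = sketch_size
    return (round(x * sketch_w / frame_w), round(y * sketch_h / frame_h))
```

If the two pictures did not share the same perspective, a perspective transformation would be needed instead of plain scaling.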

Results

The first part of the Use Case poses some problems. First of all, the quality of the webcam is not good enough to use it for image recognition. The position of the camera is also a problem: it is difficult and probably not possible to record the whole table with a built-in webcam. The focus therefore switched from recording a table soccer game to tracking a white ball on a dark background. During tests it became apparent that the system worked unreliably, and only under unchanged lighting conditions.

The second part of the Use Case should deliver acceleration data. In fact it does, but it is hard to trust the data because there is no reference for comparison. The sensor itself delivers six values for acceleration: a high byte and a low byte for each of the three axes. The script mentioned above should calculate the acceleration in g (units of acceleration due to gravity) and reduce the range of fluctuation. However, the test results show acceleration values even when the sensor stands still for a while. For further development it could be useful to look for an existing library in order to focus on higher-level calculations.
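The conversion from the six raw bytes to values in g can be sketched as follows. The example assumes the sensor's default full-scale range of ±2 g, for which the MPU6050 datasheet specifies 16384 counts per g, and the byte order X high, X low, Y high, Y low, Z high, Z low. The function names are hypothetical. Note that a sensor at rest still measures gravity, so non-zero readings at standstill are expected on at least one axis; the resting mean can be taken as an offset as long as the orientation of the sensor does not change.

```python
ACCEL_LSB_PER_G = 16384.0  # MPU6050 sensitivity at the +/-2 g range


def to_signed16(high, low):
    """Combine a high and a low byte into a signed 16-bit integer."""
    value = (high << 8) | low
    return value - 65536 if value >= 0x8000 else value


def raw_to_g(raw_bytes):
    """Convert six bytes (XH, XL, YH, YL, ZH, ZL) to an (x, y, z) tuple in g."""
    return tuple(
        to_signed16(raw_bytes[i], raw_bytes[i + 1]) / ACCEL_LSB_PER_G
        for i in (0, 2, 4)
    )


def rest_offset(samples):
    """Return the per-axis mean of (x, y, z) samples recorded at standstill."""
    n = len(samples)
    return tuple(sum(sample[axis] for sample in samples) / n for axis in range(3))
```

Subtracting the result of `rest_offset` from later readings removes both the constant sensor offset and the gravity component for that orientation.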

The third part of the Use Case shows the best results. It is possible to track a ball in video data and copy its position to a model with the software components explained above.

Nevertheless, the system has room for improvement. One problem is detecting the ball when it is hidden under a bar or clamped by a player. An option for further development would be to estimate the position of the ball from its speed (computed from the data of an acceleration sensor, for example) and to use this estimate while the ball is invisible. In addition, a more powerful host system should be used for further development. The results show that the video appears to play in slow motion because the computation per frame takes longer than it should.
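Bridging the moments in which the ball is invisible could look like the sketch below. It swaps in a simpler technique than the one suggested in the text: instead of sensor data, it estimates the velocity from the last two detected positions and assumes that the ball keeps moving in a straight line at constant speed. The function names are hypothetical.

```python
def estimate_velocity(previous_pos, last_pos, dt):
    """Return (vx, vy) in pixels per second from two detections `dt` seconds apart."""
    return (
        (last_pos[0] - previous_pos[0]) / dt,
        (last_pos[1] - previous_pos[1]) / dt,
    )


def predict_position(last_pos, velocity, elapsed):
    """Extrapolate the position `elapsed` seconds after the last detection."""
    return (
        last_pos[0] + velocity[0] * elapsed,
        last_pos[1] + velocity[1] * elapsed,
    )
```

Such a prediction ignores bounces off bars, players and walls, so it is only useful for very short gaps.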

Future Work

There are a few possibilities to enhance the project and use the experiment and the results for future work:

- further development of the algorithm to detect the ball

- expand the system to other kinds of sports

- also detect the pitch with image recognition

- generate player statistics with sensor data