Use Case

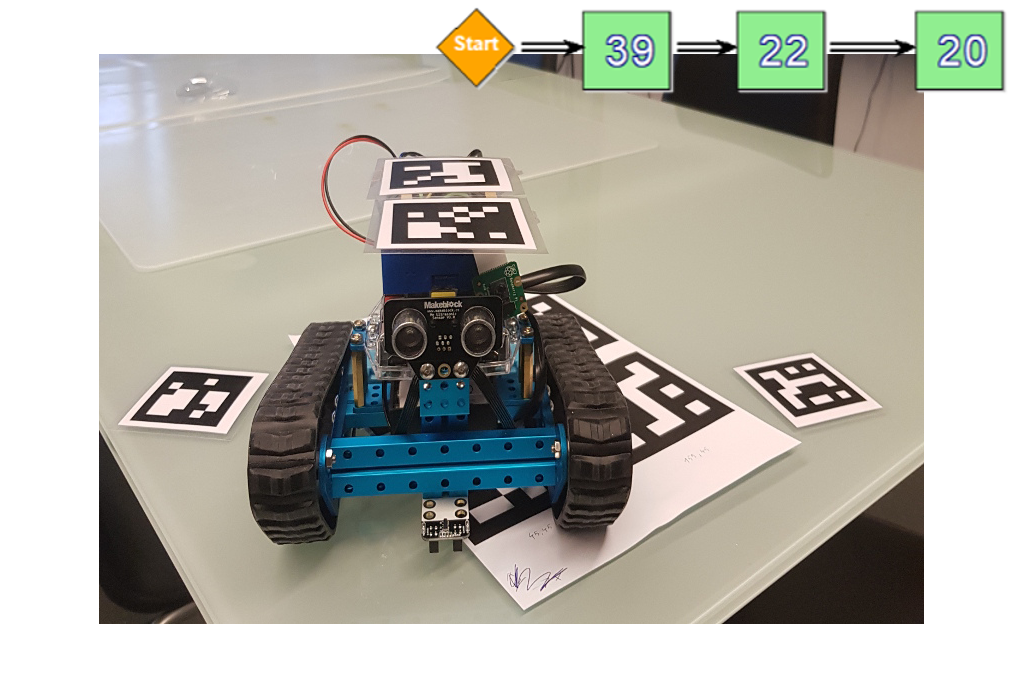

MBot Path Finder

The MBot Path Finder has been developed using the MBot Rover, the OMiTag project and the ADOxx modelling interface.

Use Case Description: Following a path that is modelled in ADOxx and recognizing objects at the reached destination

The basic idea of the project is to provide a system that steers a robot to a specific location with the help of a camera system and checks whether an item is located there. The path the robot should take is modelled in ADOxx. When the model is executed, the robot approaches one location after another, guided by the camera system, which provides information about the locations. At the destination, the system should check whether an item is present and identify it.

The use cases were implemented sequentially, each one extending the previous one.

Use Case 1: Implementing a REST client that controls the MBot Rover and drives it to predefined locations from a default state

Use Case 2: Implementation of a path finding algorithm that takes information from the OMiTag Server and calculates the movement to the next location modelled in ADOxx

Use Case 3: Recognition of items which may be placed at the destination spots

The project is meant as a possible implementation of an algorithm that could be used in a warehouse setting.

Problem Statement:

- Easy to use coordination between the modelling method and the robot

- Process a continuous stream of information

- Calculating the needed movements in real time

- Use of image recognition in a dynamic environment

Experiment

Using a video camera, the OMiTag project is able to return the 3D coordinates of the different tags. A WebSocket interface is provided for accessing the data. For this purpose, a WebSocket client has been written which connects to the OMiTag server and retrieves a continuous stream of data. Every data point within the stream is identified by its tag ID, which makes it possible to distinguish the points within the camera's range.
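
Because every data point carries a tag ID, the stream can be reduced to the latest known position per tag. The following is a minimal sketch of that idea, not the project's actual client. The class names `TagPosition` and `TagTracker` and the field layout are assumptions, and parsing of the OMiTag message format is deliberately left out because it is not described here.

```java
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical holder for one 3D tag reading; the field names are assumptions. */
final class TagPosition {
    final int tagId;
    final double x, y, z;

    TagPosition(int tagId, double x, double y, double z) {
        this.tagId = tagId;
        this.x = x;
        this.y = y;
        this.z = z;
    }
}

/** Keeps only the most recent reading per tag ID. */
final class TagTracker {
    // Concurrent map, because a WebSocket listener thread would typically
    // write while the movement computation reads.
    private final Map<Integer, TagPosition> latest = new ConcurrentHashMap<>();

    /** Called for every data point received from the stream. */
    void onReading(TagPosition reading) {
        latest.put(reading.tagId, reading);
    }

    /** Returns the last known position of a tag, if it has been seen. */
    Optional<TagPosition> positionOf(int tagId) {
        return Optional.ofNullable(latest.get(tagId));
    }
}
```

A WebSocket message handler would call `onReading` for each parsed data point, while the movement computation queries `positionOf` for the MBot tags and the destination tag.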

To identify the position of the MBot, two tags have been used. They make it easier to detect the MBot and at the same time allow us to compute the movements necessary for reaching a desired destination tag.

Algorithm description:

The main function used for calculating the movement is atan2, which computes the angle between the positive X axis and the line from the origin to a given point. It is used to determine the direction in which the MBot has to rotate: the angles for the MBot and for the destination are computed and subtracted from each other. The MBot is correctly oriented as soon as the difference between the two angles is almost zero. To determine when to stop, the X coordinates of the MBot and the destination are subtracted; as soon as this difference is approximately zero, the MBot stops.
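
The description above can be sketched as a single decision function. This is an illustration under assumptions rather than the project's code: the roles of the two MBot tags (rear and front), the tolerance values, the command names and the mapping of a positive angle difference to a left turn are all hypothetical. Like the described approach, the sketch does not wrap the angle difference.

```java
/** Sketch of the atan2-based steering decision; names and tolerances are assumptions. */
final class Steering {
    enum Command { ROTATE_LEFT, ROTATE_RIGHT, FORWARD, STOP }

    // Hypothetical tolerances; suitable values depend on the camera setup.
    static final double ANGLE_TOLERANCE = Math.toRadians(5);
    static final double X_TOLERANCE = 0.05;

    /**
     * rear and front are the two tags on the MBot, dest is the target tag.
     * Each point is given as {x, y}.
     */
    static Command decide(double[] rear, double[] front, double[] dest) {
        // Angle of the MBot's axis relative to the positive X axis.
        double heading = Math.atan2(front[1] - rear[1], front[0] - rear[0]);
        // Angle of the line from the MBot to the destination.
        double bearing = Math.atan2(dest[1] - rear[1], dest[0] - rear[0]);
        double diff = bearing - heading;

        if (Math.abs(diff) > ANGLE_TOLERANCE) {
            // Positive difference: target lies counter-clockwise in a y-up frame,
            // assumed here to correspond to a left turn.
            return diff > 0 ? Command.ROTATE_LEFT : Command.ROTATE_RIGHT;
        }
        // Aligned: drive until the X coordinates (almost) match.
        if (Math.abs(dest[0] - front[0]) > X_TOLERANCE) {
            return Command.FORWARD;
        }
        return Command.STOP;
    }
}
```

The two tolerances matter in practice: if they are too tight for the noise in the tag readings, the difference never settles inside the band and the robot keeps correcting.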

The 4 main components of the project are:

- The MBot, which is connected to a Raspberry Pi that runs a RESTful server.

- The Client which contains: a) a RESTful client, b) a WebSocket client, c) the movement computation.

- The OMiTag project.

- An ADOxx modelling method

The RESTful server has been exported as a jar that can be run on the Raspberry Pi to start the server. Similarly, the Client can be exported as a jar that takes an array containing all the destination points as a parameter. In the ADOxx modelling method, the locations which should be checked are modelled and executed. The execution of the model passes the IDs as parameters for the

Description of the overall workflow:

- The OMiTag determines the position of the tags and sends the information stream to the Client.

- Based on the desired destination, the movement path is computed and the movement action is sent to the Raspberry Pi.

- The Raspberry Pi executes the movement by sending the command to the MBot.

- Repeat steps 1, 2 and 3 until the destination has been reached.
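
The workflow above amounts to a loop per destination. The sketch below shows one way to structure it, assuming a hypothetical `planner` callback that covers steps 1 and 2 (read the latest tag positions and compute the next command) and a hypothetical `rover` callback that covers step 3 (forward the command to the Raspberry Pi). The `STOP` command name and the step limit are also assumptions; the limit is a guard against the loop never terminating.

```java
import java.util.List;
import java.util.function.Consumer;
import java.util.function.IntFunction;

/** Sketch of the overall control loop; the callbacks are hypothetical placeholders. */
final class Workflow {
    static final String STOP = "STOP";

    /**
     * Visits each destination tag in order.
     * Returns true if every destination was reached within maxStepsPerTarget.
     */
    static boolean run(List<Integer> destinations, IntFunction<String> planner,
                       Consumer<String> rover, int maxStepsPerTarget) {
        for (int dest : destinations) {
            boolean reached = false;
            for (int step = 0; step < maxStepsPerTarget && !reached; step++) {
                String command = planner.apply(dest); // steps 1 and 2
                rover.accept(command);                // step 3
                reached = STOP.equals(command);       // planner signals arrival
            }
            if (!reached) {
                rover.accept(STOP); // halt the rover instead of looping forever
                return false;
            }
        }
        return true;
    }
}
```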

DevOps Manual

This section describes how to set up the project. The experiment was carried out in the OMiLab with an OMiTag server running in the OMiLab network.

- Get the RoverServer from https://gitlab.dke.univie.ac.at/edu-semtech/RoverServer and build a jar using Maven

- Deploy the jar on the Raspberry Pi on the MBot Rover and start it using java -jar

- Make sure that the motors of the MBot Rover are switched on (press the small red button)

- Get the MBot Path Finder client from https://gitlab.dke.univie.ac.at/edu-semtech/MBotPathFinder

- The basic setup is now done and the client can be used from an IDE. Because the client uses the IDs of the tags to find the locations, you have to pass the IDs as parameters (Run configuration -> Arguments -> ID1 ID2 ID3 …).

- The resources folder of the MBot Path Finder client contains an ADOxx abl file, in which you can model the path the Rover will take

- To use the ADOxx model to execute the algorithm, you have to build a runnable jar of the client with the name mbotclient.jar and place it in C:\

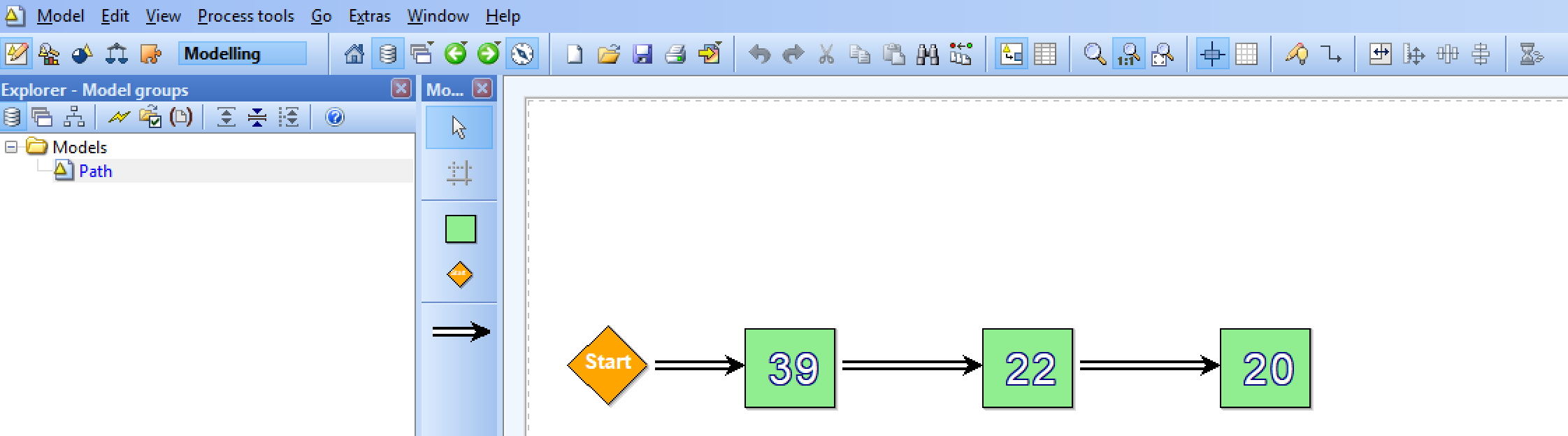

Modelling Method

The following example explains the modelling method and how it works. The modelling method consists of one modelling type with the name “Controller”. This modelling type has two classes and one relation.

| Class | Explanation | Visuals |
| --- | --- | --- |
| Start | Defines the start point of the path. |  |
| Position | The location which the Rover should go to. The name has to be the ID of the tag. |  |

| Relation | Explanation | Visual |
| --- | --- | --- |
| Connector | Connects the classes |  |

The following example shows a model which, after execution, would start the Rover path algorithm to go to the locations of the tags with the IDs 39, then 22, then 20.

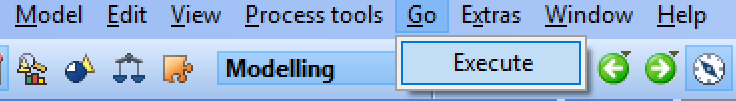

To execute the model, press “Go” and then “Execute”. You will be informed that the execution has started.

This will call the jar and pass the parameters of the Tags to start the path finding algorithm.
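
On the client side, the tag IDs arrive as command-line arguments. The sketch below shows how they could be converted into an ordered list of destinations. The class name `DestinationArgs` is hypothetical, and the sketch assumes that tag IDs are plain integers, as in the example model (39, 22, 20).

```java
import java.util.ArrayList;
import java.util.List;

/** Sketch of turning command-line arguments into destination tag IDs. */
final class DestinationArgs {
    /** Converts arguments such as {"39", "22", "20"} into tag IDs, keeping their order. */
    static List<Integer> parse(String[] args) {
        List<Integer> ids = new ArrayList<>();
        for (String arg : args) {
            try {
                ids.add(Integer.parseInt(arg.trim()));
            } catch (NumberFormatException e) {
                // Fail early rather than driving towards an unknown tag.
                throw new IllegalArgumentException("Not a tag ID: " + arg, e);
            }
        }
        if (ids.isEmpty()) {
            throw new IllegalArgumentException("At least one tag ID is required");
        }
        return ids;
    }
}
```

Rejecting malformed arguments before the rover starts moving keeps a modelling mistake, such as a Position whose name is not a tag ID, from surfacing only mid-run.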

Results

In this section a video is provided which presents the outcome of the experiment.

Setup:

The MBot is instructed to reach three different points of interest, which are represented by three different tags. As soon as the MBot reaches its last destination, it stops.

Problems:

- Positioning the video camera of the OMiTag project at the right angle so that it produces accurate readings. Room lighting can also negatively influence the detection of the tags.

- Choosing the right thresholds/tolerance for the positioning of the robot towards the next destination. Given the wrong parameters, the robot would enter an infinite loop, permanently trying to reach a perfect angle of zero.

- Supplying the MBot with sufficient energy, which is needed for a consistent movement pattern. During prolonged testing, the batteries had to be changed repeatedly. Using a cord supplies the MBot with sufficient energy but at the same time makes handling the MBot more difficult, especially when it behaves in an unexpected manner.

- Due to the extensive effort which went into the development of use case 2, it was not possible to tackle the third use case.

Future Work:

- The MBot can only target destinations which have a higher X coordinate than the MBot's own. Adjusting the computation so that it works over the entire coordinate system would be desirable.

- Instructing the MBot to drive a specified distance rather than covering the distance in small iterations.

- Making the MBot rotate based on a given angle rather than revolving around the z axis in small iterations.

- Implementing image recognition to check for items at the visited locations.
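
For the first item, one possible direction is sketched below. atan2 itself already covers all four quadrants, but the difference of two such angles can fall outside the range from -π to π, so a common technique is to wrap it back into that range before deciding on the turn direction. The class and method names are hypothetical, and whether this alone would lift the restriction in the existing client has not been verified.

```java
/** Sketch of wrapping an angle difference so that it is valid in all quadrants. */
final class Angles {
    /** Wraps an angle in radians into the range [-PI, PI]. */
    static double normalize(double angle) {
        return Math.atan2(Math.sin(angle), Math.cos(angle));
    }

    /**
     * Signed smallest rotation from heading to bearing, in radians.
     * Positive values mean counter-clockwise in a y-up frame.
     */
    static double turnAngle(double heading, double bearing) {
        return normalize(bearing - heading);
    }
}
```

For example, with a heading of 170° and a bearing of -170°, the raw difference is -340°, while the wrapped value is a 20° turn in the opposite direction.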